But this time the villagers, who had been fooled twice before, thought the boy was again deceiving them, and nobody stirred to come to his help. So the Wolf made a good meal off the boy’s flock, and when the boy complained, the wise man of the village said: “A liar will not be believed, even when he speaks the truth.”

The Shepherd’s Boy, Aesop’s Fables

Over the years, in my tenure as a manager, I went through several iterations of “What exactly is my role?” Various answers have appeared: mentor, disciplinarian, maker of lists, taskmaster, procurer of take-out food, motivational speaker, and he-who-should-just-get-out-of-the-way.

What exactly I was managing seemed highly contextual, fluid, and often fleeting: this week’s challenges were not like last week’s. Therefore, a flexible approach seemed important. However, sometimes I would discover a management principle that would turn out to be long-lived. While I was in charge of a group of systems administrators, I had a powerful epiphany. For them, I realized the thing I was actually managing was their attention span. Indeed, I found it was a very precious commodity.

(image: Library of Congress)

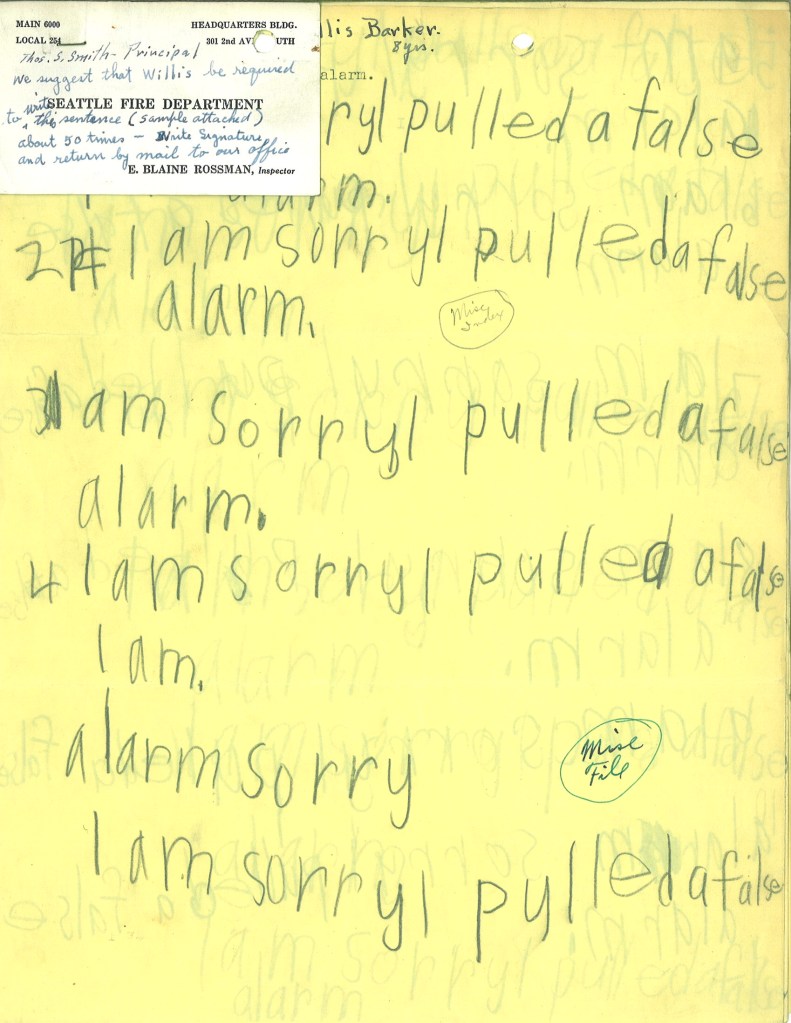

False Alarms

At Discovery Mining, the systems group maintained the infrastructure that sustained a very complicated web site. In line with our mantra “know before the client knows,” we implemented an extensive monitoring system. If something bad was going to happen, we wanted to be aware of it, proactively meeting it head on. To that end, we automatically kept track of thousands of aspects of the systems under our care. The level of detail was impressive: within an individual server, we could tell whether a specific disk drive was working or how fast the cooling fans were spinning.

You can imagine that monitoring all these minutiae produced a staggering amount of data. We did our best to make sure all our records were well-organized and graphically represented, so they could be quickly consulted on an as-needed basis. However, in the case of an emergency, our monitoring system was designed to grab our attention by lighting up our phones, pagers, and email inboxes.

Tuning our alerting system was a never-ending struggle, and perfectly illustrates the theme of this article. That’s because not every alert turned out to be indicative of a real problem. There were many moments when our monitoring system morphed into that boy who cried wolf, with my staff playing the role of the circumspect villagers.

(image: US Patent 3472195)

Normalization Of Deviance

Diane Vaughan, a professor of sociology at Columbia University, studied the Challenger space shuttle disaster in depth. Her resulting book, The Challenger Launch Decision, introduces an interesting concept called the normalization of deviance:

Social normalization of deviance means that people within the organization become so much accustomed to a deviant behavior that they don’t consider it as deviant, despite the fact that they far exceed their own rules…

Diane Vaughan 1

I think this problem is richly relevant for those who troubleshoot professionally: by definition, front-line technicians deal with “deviant behaviors” (a.k.a. malfunctions) on a constant basis. When broken becomes part of your daily routine, it will reset your sense of normal. Likewise, the warning systems that troubleshooters rely upon are subject to these same psychological factors: too many false signals will eventually be ignored, just like the shepherd boy’s cries.

Homo sapiens has been remarkably adaptive, finding a way to survive in deserts, jungles, mountains, prairies, and the frozen tundra. Eking out a living in a wide variety of climates, terrains, and social conditions is a noble part of our humanity. This ability is surely aided by various psychological coping mechanisms: if you are thrown into an unfavorable environment, the ability to reset your expectations, focusing on what “success” means in the new context, is a must.

But alas, virtues can also be vices: because our expectations are elastic, they can be ratcheted up indefinitely in a never-ending exercise of “keeping up with the Joneses.” If you’ve ever spent time on the hedonic treadmill, you know that a constantly moving target of success can feel unsatisfying; this is why people crave experiences that restore a sense of perspective to their lives.

Those same human capabilities that favor adaptation appear to be at work when it comes to the normalization of deviance. Vaughan eloquently explains the process:

Signals of potential danger tend to lose their salience because they are interjected into a daily routine full of established signals with taken-for-granted meanings that represent the well-being of the relationship. A negative signal can sometimes become simply a deviant event that mars the smoothness of the ongoing routine. As the initiator’s unhappiness grows, the number, frequency, and seriousness of signals of potential danger increase. While they would surely catch the partner’s attention if they came all at once, this is not the case when new signals are introduced slowly amid others that indicate stability. A series of discrepant signals can accumulate so slowly that they become incorporated into the routine; what begins as a break in the pattern becomes the pattern. Small changes—new behaviors that were slight deviations from the normal course of events—gradually become the norm, providing a basis for accepting additional deviance.

Diane Vaughan, The Challenger Launch Decision 2

I definitely observed this process in action with my team. Over the course of a typical day, a systems administrator would receive many “signals” that included phone calls, emails, project managers stopping by to ask for updates, colleagues asking for help, etc. This “daily routine full of established signals” would be mixed with messages generated by our monitoring system. Within this context, evidence of deviations can accumulate slowly. Every new alert can subtly move your mental anchor, integrating it as the “new normal.”

Alert Fatigue

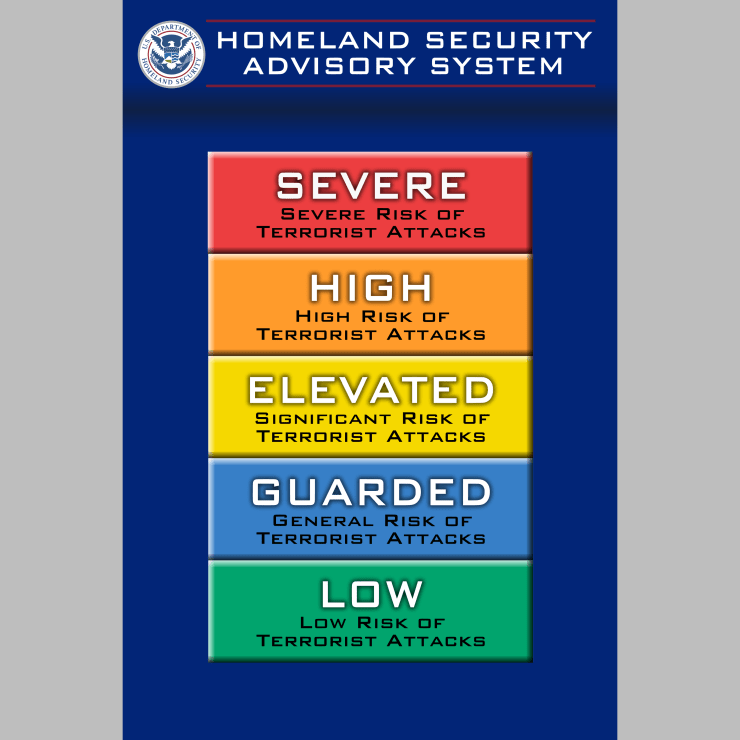

Let’s go back to the early days of the Homeland Security Advisory System. I remember making travel plans when this scheme was in place:

(image: Wikimedia Commons)

If the threat level was blue or yellow or orange, what exactly was I supposed to do about it? Not travel at all? The most frustrating thing about this advisory system was the lack of specifics. The precise nature of the threats underlying the various levels was a secret, making it hard to assess your own situation. Most importantly, what particular course of action should be taken in response to a given threat level?

Using the DHS’ “Chronology of Changes to the Homeland Security Advisory System,”3 I filled in a table with the time spent at the various threat levels*:

| Start | End | Days | Threat Level |
| --- | --- | --- | --- |
| Mar 12, 2002 | Sep 9, 2002 | 182 | YELLOW |
| Sep 10, 2002 | Sep 23, 2002 | 14 | ORANGE |
| Sep 24, 2002 | Feb 6, 2003 | 136 | YELLOW |
| Feb 7, 2003 | Feb 26, 2003 | 20 | ORANGE |
| Feb 27, 2003 | Mar 16, 2003 | 18 | YELLOW |
| Mar 17, 2003 | Apr 15, 2003 | 30 | ORANGE |
| Apr 16, 2003 | May 19, 2003 | 34 | YELLOW |
| May 20, 2003 | May 29, 2003 | 10 | ORANGE |
| May 30, 2003 | Dec 20, 2003 | 205 | YELLOW |
| Dec 21, 2003 | Jan 8, 2004 | 19 | ORANGE |
| Jan 9, 2004 | Jul 31, 2004 | 205 | YELLOW |
| Aug 1, 2004 | Nov 9, 2004 | 101 | ORANGE |
| Nov 10, 2004 | Jul 6, 2005 | 239 | YELLOW |
| Jul 7, 2005 | Aug 11, 2005 | 36 | ORANGE |
| Aug 12, 2005 | Aug 9, 2006 | 363 | YELLOW |
| Aug 10, 2006 | Aug 12, 2006 | 3 | RED |
| Aug 13, 2006 | Apr 20, 2011 | 1712 | ORANGE |

*The RED threat level was used only once, from August 10 to 12, 2006, “for flights originating in the United Kingdom bound for the United States.” The rest of the USA’s commercial aviation activity was set to ORANGE for these days. For this brief split situation, I counted these days under the higher threat level (RED) set by the DHS.

Then I added everything up:

| Days | % | Threat Level |
| --- | --- | --- |
| 3 | 0.09% | RED |
| 1942 | 58.37% | ORANGE |
| 1382 | 41.54% | YELLOW |
| 0 | 0.00% | BLUE |
| 0 | 0.00% | GREEN |
| 3327 | 100.00% | Total |
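For anyone who wants to check the arithmetic, here is a minimal Python sketch that recomputes the tallies from the chronology rows. It assumes each period is counted inclusively (both the start date and the end date count as a day), which is how the Days column works out. The names `PERIODS`, `inclusive_days`, and `tally` are made up for this illustration.

```python
from collections import Counter
from datetime import date

# (start, end, level) rows copied from the chronology table in this article.
PERIODS = [
    (date(2002, 3, 12), date(2002, 9, 9), "YELLOW"),
    (date(2002, 9, 10), date(2002, 9, 23), "ORANGE"),
    (date(2002, 9, 24), date(2003, 2, 6), "YELLOW"),
    (date(2003, 2, 7), date(2003, 2, 26), "ORANGE"),
    (date(2003, 2, 27), date(2003, 3, 16), "YELLOW"),
    (date(2003, 3, 17), date(2003, 4, 15), "ORANGE"),
    (date(2003, 4, 16), date(2003, 5, 19), "YELLOW"),
    (date(2003, 5, 20), date(2003, 5, 29), "ORANGE"),
    (date(2003, 5, 30), date(2003, 12, 20), "YELLOW"),
    (date(2003, 12, 21), date(2004, 1, 8), "ORANGE"),
    (date(2004, 1, 9), date(2004, 7, 31), "YELLOW"),
    (date(2004, 8, 1), date(2004, 11, 9), "ORANGE"),
    (date(2004, 11, 10), date(2005, 7, 6), "YELLOW"),
    (date(2005, 7, 7), date(2005, 8, 11), "ORANGE"),
    (date(2005, 8, 12), date(2006, 8, 9), "YELLOW"),
    (date(2006, 8, 10), date(2006, 8, 12), "RED"),
    (date(2006, 8, 13), date(2011, 4, 20), "ORANGE"),
]


def inclusive_days(start, end):
    """Count calendar days in a period, including both endpoints."""
    return (end - start).days + 1


def tally(periods):
    """Sum the days spent at each threat level."""
    totals = Counter()
    for start, end, level in periods:
        totals[level] += inclusive_days(start, end)
    return totals


totals = tally(PERIODS)
grand_total = sum(totals.values())

# A Counter returns 0 for levels that never appear (BLUE and GREEN here).
for level in ("RED", "ORANGE", "YELLOW", "BLUE", "GREEN"):
    days = totals[level]
    print(f"{level:<7}{days:>5}{days / grand_total:>9.2%}")
```

Run as-is, it reproduces the summary figures: 3 days at RED, 1942 at ORANGE, 1382 at YELLOW, and none at BLUE or GREEN, out of 3327 days in total.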

Given that the threat level was never set below “elevated” (yellow), you have to wonder about the effect of having the entire nation on alert for so long. Until I added it all up, I hadn’t realized that we had spent over five years at the orange level (“High”). That’s a long time! Five years spent doing anything will eventually become your baseline point of reference, the “new normal.” But if there really was an imminent threat, a sense of normalcy is probably not what the designers of the system intended.

After a while, I did what millions of other Americans did: ignore the Homeland Security Advisory System. This phenomenon is called “alert fatigue” and is well known in the health care industry, often studied in the context of doctors grappling with the deluge of alerts created by medical software. Attention is a finite human commodity and there’s simply a limit to the number of alarm messages that can be processed before they are ignored and established as routine.

Mixed Messages and Vigilance

As we were monitoring thousands of details about our infrastructure at Discovery Mining, errors made configuring or tuning our alerts would also be multiplied several thousand-fold. Sometimes, the system would generate an overwhelming number of false positives. When receiving a large volume of alerts over the course of a short period of time, the tendency is to consider all the messages equally important (and therefore, unimportant!).

I physically cringed every time our monitoring system became an unrepentant peddler of falsities, as I knew it was resetting my team’s expectations for “normal.” False alarms change the meaning of the message from “this is important” to “this can be ignored.”

(image: Open Clip Art)

Speaking of meaning, be careful about overloading your alerts. The venerable Check Engine light, found on the dash of your typical car, is a great example of the problems associated with mixing messages. The Check Engine light used to be a big deal: it meant the car had a serious problem, and ignoring it could lead to “chuck engine.” However, at some point, it became fashionable for some automobile manufacturers to turn on the Check Engine light for much less urgent reasons, among them the need for scheduled maintenance.

There’s nothing wrong with bringing the need for routine maintenance or a loose gas cap to a driver’s attention, but encumbering the Check Engine light with these multiple meanings leads to all the problems previously discussed. Overloading is not quite a false alarm, but the effect is similar. Diluting a warning’s potency predictably leads to indifference, especially when the statistics favor the less urgent problems. I used to take the Check Engine light very seriously, but these additional meanings have led me to be nonchalant about its appearance on the dash. A catastrophic engine meltdown is a comparatively rare event, so if the warning can mean either “crisis” or “get an oil change,” chances are it’s the more benign explanation. Therefore, I have reset my expectations accordingly. A better solution would be to preserve the Check Engine light’s meaning of emergency by adding a separate alert for those less critical issues.
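As a rough illustration of that separation, the Python sketch below maps each condition to exactly one severity, and each severity to its own indicator. The condition and indicator names are hypothetical, invented for this example; they are not taken from any real vehicle diagnostic standard.

```python
from enum import Enum


class Severity(Enum):
    EMERGENCY = "emergency"      # stop and act now
    MAINTENANCE = "maintenance"  # schedule it, no urgency


# Hypothetical conditions, each assigned exactly one severity.
SEVERITY_BY_CONDITION = {
    "engine_misfire": Severity.EMERGENCY,
    "oil_pressure_low": Severity.EMERGENCY,
    "loose_gas_cap": Severity.MAINTENANCE,
    "service_due": Severity.MAINTENANCE,
}

# One indicator per severity, so no indicator carries two meanings.
INDICATOR_BY_SEVERITY = {
    Severity.EMERGENCY: "check_engine_light",
    Severity.MAINTENANCE: "service_reminder_light",
}


def indicator_for(condition: str) -> str:
    """Return the single indicator that should light up for a condition."""
    try:
        severity = SEVERITY_BY_CONDITION[condition]
    except KeyError:
        # Refuse to guess: an unclassified condition has to be triaged by a
        # person before it is allowed to light anything up.
        raise ValueError(f"unclassified condition: {condition!r}") from None
    return INDICATOR_BY_SEVERITY[severity]


print(indicator_for("loose_gas_cap"))
print(indicator_for("engine_misfire"))
```

The design choice worth noting is the refusal to pick a default: every new condition has to be deliberately assigned a severity, which keeps the emergency indicator from quietly picking up extra meanings over time.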

In summary, be aware of the effects that permanent and false alerts have on your team. Always aim for a 1:1 relationship between triggered alarms and consequences felt. If your processes require constant vigilance, like those required for aviation or medicine, it’s far better to distill warnings into action-oriented processes and routines (see: checklists). Alarms should always result in doing, and it’s even better if the actions to be taken are clearly laid out and practiced beforehand.
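One way to keep an eye on that 1:1 goal is to measure it. The sketch below is a hypothetical illustration, not a description of any particular monitoring system: it assumes you record, for each triggered alert, whether someone actually had to do something about it. The `Alert` record, the function names, and the sample alert names are all made up for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Alert:
    name: str
    led_to_action: bool  # did someone actually have to do something?


def actionable_ratio(alerts):
    """Fraction of triggered alerts that led to action (1.0 is the 1:1 ideal).

    Returns None when there are no alerts, since the ratio is undefined.
    """
    if not alerts:
        return None
    return sum(a.led_to_action for a in alerts) / len(alerts)


def ratio_by_name(alerts):
    """Compute the same ratio per alert name, to spot the noisy checks."""
    grouped = defaultdict(list)
    for alert in alerts:
        grouped[alert.name].append(alert)
    return {name: actionable_ratio(group) for name, group in grouped.items()}


# Made-up sample log: two real problems, two false alarms from one check.
sample = [
    Alert("disk_full", True),
    Alert("fan_speed", False),
    Alert("fan_speed", False),
    Alert("ping_timeout", True),
]

print(actionable_ratio(sample))
print(ratio_by_name(sample))
```

In this made-up log, half of the alerts were actionable overall, and every false alarm came from the `fan_speed` check, which makes it the obvious candidate for retuning or removal.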

(image: Seattle Municipal Archives / CC BY 2.0)

References:

- Header image: Dave Phillips, photographer. Retrieved from Unsplash, https://unsplash.com/photos/Q44xwiDIcns.

- 1 Consulting News Line. “Interview: Diane Vaughan” May, 2008.

- 2 Diane Vaughan. The Challenger Launch Decision: Risky Technology, Culture, and Deviance at NASA (Chicago: University of Chicago Press, 1996), pg. 414.

- 3 Department of Homeland Security, “Chronology of Changes to the Homeland Security Advisory System.”