In the relatively short span between the start of the Industrial Revolution and now, the amount of knowledge accumulated in just a single industry would take many lifetimes to fully understand. Imagine trying to know everything there is about cars or computers or petroleum extraction. These industries haven’t been around that long, but the people associated with them have been very busy!

On top of that, many machines combine innovations from a variety of sources. Think about a modern airliner, which is an amalgamation of the latest-and-greatest from a large number of other industries: high-efficiency turbine engines, lightweight composite materials, advanced computer systems for communication and navigation, etc. Within a jet is an endless list of technological advances, all cobbled together to create one awesome super-system. “Systems of systems” like this have long surpassed the ability of any one human to fully understand them. Yet, by finding ways to manage this complexity, we are still able to use technology to better our lives.

Without further ado, let me introduce the humble checklist. Within its boxes contains a method for handling complexity that is so effective (and simple) that large portions of our advanced industrial civilization would be impossible without it. At the same time, I also want to familiarize you with a great book on the subject that shows just how much we can gain from using checklists (and why). Creating a checklist is the ultimate way to communicate the results of what you’ve learned from a troubleshooting exercise. As opposed to other passively consumed forms of post-repair communication like “incident reports” and “service bulletins,” the checklist puts the best way of doing something directly into the hands of those doing the work. As you’ll see, it’s not enough to know: if facts aren’t translated into doing on a consistent basis, your learning is meaningless. Because the checklist distills information into an action-oriented form, I shed a lone tear when contemplating the beauty of its bridge between what is known and what should be done about that knowing.

(image: Bart Fields / CC BY 2.0)

So Many Things Needed To Go Right…And Luckily They Did

Atul Gawande’s The Checklist Manifesto is a call to arms for people who want to improve the world. The book begins with a compelling story showing the awesome power of advanced medical technology. Gawande recounts the details of a 3-year-old who fell into an ice-covered fishpond in the Austrian Alps. The little girl sat at the bottom of a small lake for 30 minutes, in icy cold waters, before her parents located her and pulled her out. The fight to save her life began and what unfolded can only be described as a miracle of modern medicine: a well-choreographed dance between dozens of personnel and the advanced technology they used. These professionals managed to give this young girl a second chance at life, but so much had to go right for that to happen:

To save this one child, scores of people had to carry out thousands of steps correctly: placing the heart-pump tubing into her without letting in air bubbles; maintaining the sterility of her line, her open chest, the exposed fluid in her brain; keeping a temperamental battery of machines up and running. The degree of difficulty in any one of these steps is substantial. Then you must add the difficulties of orchestrating them in the right sequence, with nothing dropped…

Atul Gawande, The Checklist Manifesto 1

Now, this little girl is living a normal life. However,

For every drowned and pulseless child rescued, there are scores more who don’t make it—and not just because their bodies are too far gone. Machines break down; a team can’t get moving fast enough; someone fails to wash his hands and an infection takes hold.

Atul Gawande, The Checklist Manifesto 1

“Too Much Plane” For One Person?

As a technical field or industry matures, it gets exponentially more complicated. Knowledge accumulates indefinitely as innovations occur (i.e, there are always new things to keep up with). Also, the number of applications for an industry’s technology tends to grow over time. For example, microchips were used in just a few applications in the 1950s, but today they are everywhere. The first internal combustion engines were deployed in cars, but today you can find them in a wide variety of uses, from lawnmowers to portable electric generators. As a technology finds new applications, the intersections with each new industry creates even more complexities. The principles of the internal-combustion engine may be a known quantity, but its use in a car, an airplane, and a lawnmower are all different and have special considerations.

(image: National Museum of the US Air Force)

For aviation, this same march of technological progress resulted in increasingly sophisticated designs. Enter stage right, the Boeing Model 299: a prototype bomber that was all set to become the go-to plane for the US Army Air Corps in 1935. The result of a design competition, the 299 trounced the offering by rival Martin & Douglas in all of the important categories: range, payload capacity, and speed. However, during a demonstration in front of the Army’s top brass, there was a terrible accident. Major Ployer P. Hill, the Air Corps’ chief of flight testing, was in command during the demonstration flight of the Model 299. After takeoff, he climbed the plane to 300 feet, where it stalled, turned towards the ground, and exploded in a giant fireball on impact. Tragically, two of the five crew members aboard died in the accident, including Major Hill.

A demonstration flight with fatalities that ends in a fiery explosion in front of the press: that isn’t just a tragedy, it’s also bad marketing. To add to the woes, the Model 299 was in the midst of a design competition and the destruction of the prototype meant Boeing was disqualified. The Army ended up choosing Martin & Douglas’ design and only a few 299’s were initially ordered. However, what happened next changed aviation forever:

Still, the Air Corps faced arguments that the aircraft was too big to handle. The Air Corps, however, properly recognized that the limiting factor here was human memory, not the aircraft’s size or complexity. To avoid another accident, Air Corps personnel developed checklists the crew would follow for takeoff, flight, before landing, and after landing. The idea was so simple, and so effective, that the checklist was to become the future norm for aircraft operations. The basic concept had already been around for decades, and was in scattered use in aviation worldwide, but it took the Model 299 crash to institutionalize its use.

“The Checklist”, Air Force Magazine 2

This point is important and bears dwelling on for a moment: if someone had to die at the controls of the 299, it was actually a blessing that it happened to be Major Hill. Because his death was difficult to ignore, it wouldn’t be in vain; a rookie crashing the plane would have been easy to dismiss as a lack of experience. You can just imagine the conclusion of that hypothetical incident report: “…we recommend more training: only experienced pilots should be flying complex airplanes like the 299.” Thank goodness those test pilots dealt so honestly with the fact that Major Hill was an expert and didn’t simply recommend more preparation.

Gawande notes that the adoption of the checklist came when the aviation industry’s designs had reached a tipping point in terms of complexity. In addition to that, I feel there are some special aspects of aviation that made this leap inevitable. Flying airplanes is an endeavor where human failures are made brutally plain because their effects are so deadly. A person sits conspicuously at the controls, making the link between erroneous actions and bad outcomes readily apparent: either a safe landing and a “see you tomorrow,” or a massive fireball with coverage on the 10 o’clock news.

All human action is worthy of our scrutiny for betterment but, in other fields, the link between human error and bad outcomes may not be as readily apparent as it is in aviation. Deaths from airplane crashes aren’t any more tragic than those stemming from other kinds of poor judgement, yet I think we may be willing to give a “free pass” to mistakes in other fields. Evidence for this is the average number of annual aviation-related deaths: they have never exceeded more than 5,000 in any given year worldwide (and for most years it’s around 1,000). Contrast that to what Gawande found about the impact of human error with regards to surgery:

Avoidable surgical complications thus account for a large proportion of preventable medical injuries and deaths globally. Adverse events have been estimated to affect 3–16% of all hospitalized patients, and more than half of such events are known to be preventable. Despite dramatic improvements in surgical safety knowledge, at least half of the events occur during surgical care. Assuming a 3% perioperative adverse event rate and a 0.5% mortality rate globally, almost 7 million surgical patients would suffer significant complications each year, 1 million of whom would die during or immediately after surgery.

“WHO Guidelines for Safe Surgery” 3

Those are unbelievable numbers. You can see from these statistics that the opportunity to improve our lives by reducing the impact of human error is substantial. That sets up the narrative arc of The Checklist Manifesto: taking the checklist’s successes in other fields (aviation, construction, etc.) and applying them to medicine. Shortly, we’ll do likewise and apply the checklist to troubleshooting.

Coming To Terms With Human Fallibility

It’s clear that Major Hill’s death forced his fellow test pilots to make a tough realization. When the most respected, talented, experienced, and trained person dies attempting a task, where does that leave the rest of us? The implications for the average person, trying to do the same job, aren’t good. Cue the scary music.

When we’re talking about machines like airplanes or respirators, a single detail can be the difference between life and death. Or, less dramatically for other machines, between working and not working. That reminds me, I didn’t tell you the cause of the crash of Major Hill’s Boeing 299. I wish I could say it was some exotic failure, but the reason the plane crashed was easily preventable. On most airplanes, there is a system to lock the control surfaces (rudder, ailerons, and elevator) when not in use. This mechanism is called a “gust lock” and it prevents these all-important parts from being damaged by the wind when sitting on the ground. Of course, the ability to freely move the rudder, ailerons, and elevator is vital to controlling the airplane when in flight, so the locks must be disengaged before takeoff. Unfortunately, this critical step was missed:

From the evidence submitted the Board reached the conclusion that the elevator was locked in the first hole of the quadrant on the “up elevator” side when the airplane took off, for had the elevator been in either of the “down elevator” holes on the quadrant or the extreme “up elevator” hole, it would have been impossible for the airplane to be taken off in the former case, and in the latter case the pilot could not have gotten into the seat without first releasing the controls. With the elevator in this position they are inclined at an angle of 12.5 degrees.

During the take-off run the airplane could not assume an angle of attack greater than the landing angle of the airplane (7.5 degrees) plus the angle of incidence of the monoplane wing to the fuselage (3 degrees) or a total angle of 10.5 degrees. This would not be particularly noticeable to the pilot during the ground run.

However, as soon as the airplane left the ground, which several witnesses testified was in a tail low attitude, the elevators, with increasing power, varying as the square of the air speed (approximately 74 miles per hour at take-off), tended constantly to increase the angle of attack, until the stall was reached. The trim tab on the elevator also tended to aggravate this extreme tail heavy position, since with locked elevators, and the pilot pushing forward on the control column, the trim tabs were up, and themselves acted as small elevators on the fixed elevator proper.

National Museum of the US Air Force, Model 299 Crash Fact Sheet 4

We’ll never know for sure why the gust locks were left engaged on that fateful morning of October 30, 1935. Was Major Hill nervous about the demonstration flight? Was he distracted at that key moment in his routine when he normally disengaged them? Or, perhaps the thought just never crossed his mind (or his co-pilot’s) that day, a forgetfulness of which we’ve all been guilty.

The rub is, for those critical steps (like disengaging gust locks), the airplane has to be set up the right way, all the time. That one time you forget will be your last. Luckily, most missed steps don’t result in death, but that doesn’t lessen the number of things that need to be gotten right to make our technological civilization chug along every day.

Managing Complexity

We may have evolved with the natural world, but it still has mysteries that our reason hasn’t penetrated. Even our best mathematical models, running on massive supercomputers, can only predict the weather 6 days out. On top of that, we’ve been busy creating things that rival our natural environment in complexity. However, the specific ability to fly airplanes, manage a team building a skyscraper, or perform surgery simply weren’t a selective factor in our evolutionary history. There was no equivalent to “perfectly execute these 30 steps in the same sequence every time” as our species was grappling with surviving in the wilderness over the millennia. On the savannah, if 747s and computers had been ubiquitous as Woolly Mammoths and Saber-toothed Tigers, there’d probably be no need for the checklist! Forgetting to disengage the gust locks on an airplane puts you in the same amount of danger as getting too close to a steep ledge or staring a lion in the face, but it won’t automatically invoke a primal fear-based response in the same way. Dangers from machines must be learned.

Of course, we have learned how to master complex tasks on a consistent basis: to fly airplanes, to build skyscrapers, and to perform life-saving surgeries. In order to do these amazing things, we bolster our innate abilities with research, training, procedures, teamwork, and experience. However, as the Boeing 299 crash showed, along with thousands of other disasters before and after, this is not enough. As valuable as preparation is, we still need a safeguard against our vulnerabilities at the moment of execution. Being distracted, forgetful, tired, or stressed can negate years of training when it results in a critical step being missed.

Connecting The Checklist To Troubleshooting

There are two juicy opportunities to incorporate checklists into your fix-it routines:

- As a means to guide the problem discovery or repair phases. That is, using a checklist while troubleshooting.

- By transforming what you learned while making a repair into recommendations for the best way of doing something in the future. That is, using checklists to prevent failures from recurring.

Checklists As A Repair Guide

We’ve talked about various ways to formalize and communicate your troubleshooting knowledge, such as the venerable “troubleshooting tree.” Troubleshooting trees are good for situations where there are a lot of “if X do this, but if Y then do this instead” branches. Trees easily communicate an extraordinary level of complexity with respect to the discovery phase of the fix-it process. I’ve seen dense troubleshooting trees that look like circuit diagrams when viewed from a distance.

Just as often, you’ll have troubleshooting recipes that are simply a series of steps that must be done in a particular order. While the more open-ended problem discovery phase may be better suited to trees, the repair/correction phase is usually linear and therefore well suited to a checklist. In my company, we used troubleshooting checklists for recovery situations, like restoring a database. These procedures would have so many steps, needing to be done in the correct sequence, that making a checklist was essential.

Checklists As Preventative Medicine

If you’ve fixed enough things, the thought that “This was preventable!” is bound to cross your mind at some point. Even more so if you are consistently scrutinizing your breakdowns with a formal method like 5 Whys. Checklists are appropriate for any human-machine interaction, especially for those situations where the person at the controls can prevent something bad from happening by altering their behavior.

- For operators: when I say “operators,” I mean anyone who uses machines for work, the people actually getting the job done. Of course, this is a huge class of people: every organization has a broad section of their employees that utilize machines, big and small, to accomplish their work. There’s usually a right way and a…less than right way to operate a machine, but checklists go beyond education of “do’s and don’ts.” Training may improve awareness, but there will be situations where you need something done the right way every time. If you’re encountering failures that could be prevented through an enforced procedure, the checklist is a great match. Consider physically attaching a checklist to a machine so that it gets used every time.

- For designers: since this section is about prevention, let’s go all the way back to the people who are responsible for creating machines. Many firms make machines or tools that are used internally: manufacturer and consumer all in one. I’ve worked in software, where this is especially true: we’d often write programs for our own purposes, as well as for external clients. When you make your own tools, you will inevitably discover their flaws when they fail. Be sure to learn from these incidents by pushing that knowledge all the way back into the design process. Consider a “design principles checklist”: we had one for our programmers that asked them to consider things like security and performance when developing a new feature or fixing a bug.

Doing The Right Thing…Automatically

For all the benefits of the checklist, it still requires vigilance. What good is a checklist if it’s not enthusiastically used every time? Therefore, it helps to have a workforce that is educated on its benefits. But education may not be enough to force adoption, so the checklist will likely need management support as an official policy. Teams must be trained to use the checklist as a point of collaboration, and the organizational culture must tolerate challenging superiors when an item is missed. And so on and so forth: you can see the checklist benefits from an ecosystem that is favorable to it sustained use.

The organization that has integrated the checklist is an advanced species, but I believe there is another level beyond that, where you use automation and alter workflows to mitigate the need for checklists. Let me give you an example that shows this transition: when Discovery Mining first launched, I was the de facto systems administrator. Setting up a new server, I would do everything by hand: build the computer, install the operating system, partition the hard drives, set the networking parameters, choose the administrator password, load the correct software packages, etc. As we continued to grow, the number of servers also grew. Because I was doing everything by hand, every server turned out a little bit different. I’d eventually catch these discrepancies, but they would often lead to problems, and sometimes even an outage of our service!

To correct the situation, I made a checklist: I wrote down all the steps for setting up a server. Following the checklist whenever I deployed a new server dropped my error rate substantially. Also, it was now easy to get help for this task when I finally hired our first systems administrator. Using the checklist, we set up a few servers together. With checklist in hand, he was able to take over from there. By the way, this is a good example of how checklists—or any documentation—can be the basis for collaboration and delegation.

The checklist worked well and was far superior to the “artisanal” phase that came before: errors stemming from incorrect server configurations dropped dramatically. However, it wasn’t perfection. Forgetfully, sometimes the checklist wouldn’t be used. Even when it was, occasionally a step would be missed. Also, now we were really growing fast and we might need to install 20 new servers in a single day. People would try to set up multiple servers at a time and get confused as to where they were in the checklist. We needed a new solution for this “high-volume” era and we found it in the world of Configuration Management (specifically, we used a software package called Puppet). Once Puppet was deployed on our servers, we specified the ideal configuration and the configuration management software would enforce it everywhere in our infrastructure. The software even protected against errors after installation: if a systems administrator came along and made a change to a server that violated the established policy, it would automatically be rolled back and made right. Beautiful.

From then on, I was fanatical about having our systems “do the right thing” on their own. If you’re using a checklist to ensure a tricky sequence of events goes correctly, you have to ask yourself, “Why is it so hard for people to get this workflow right? Why have we designed something that is so prone to failure that it requires a checklist?”

It’s a fair question and may lead you to some big improvements. Going back to the story of the origin of the checklist, we can push ourselves and think of ways to do better. Can we add another layer of automated safety so that the checklist isn’t the only thing standing between life and death? What if that Boeing 299 had a single button that adjusted all of the plane’s settings to “takeoff” mode (including disengaging the gust locks)? Or, a warning system that would blink and beep if the engines were started while the gust locks were still engaged? Better yet, perhaps the ignition system could be wired so that it’s impossible to start the engines if the gust locks are engaged. Or, what about a backup system that would automatically disengage the gust locks if the plane exceeded a certain speed?

If you combine these kinds of improvements with the corrective power of the checklist, any task can be done safely.

Saving Lives

The final chapter of The Checklist Manifesto is a gripping account of the checklist actually saving someone’s life. This time it is Gawande himself who sees the benefits first-hand, although he was initially reluctant to introduce it to his operating room:

But in my heart of hearts—if you strapped me down and threatened to take out my appendix without anesthesia unless I told the truth—did I think the checklist would make much of a difference in my cases? No. In my cases? Please.

Atul Gawande, The Checklist Manifesto 1

Although I have readily advised others to use them, I’ll admit that in the past I’ve also been reluctant to personally adopt process-related constraints on how I work. Like Gawande, I used to think that, because I’m good at what I do, using something like a checklist was beneath me.

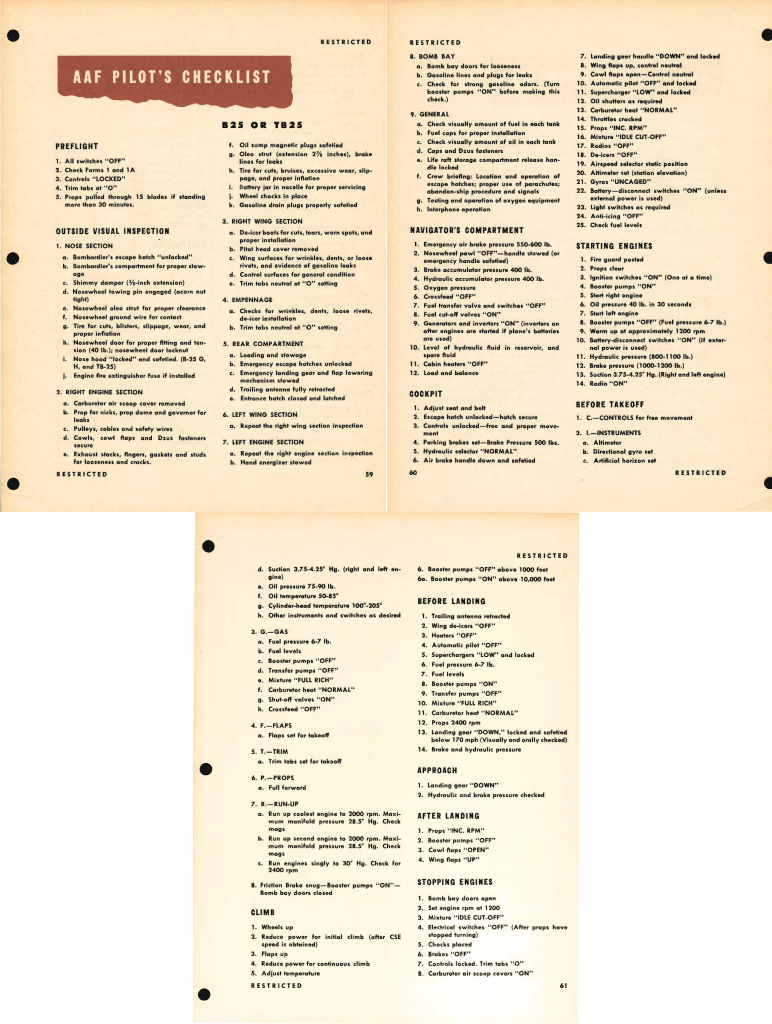

But, I’ve been humbled too many times to think I’m above the checklist. Even with vigilance, talent, and experience, you will make mistakes. As a pilot, I’ve seen first-hand the value of checklists. I’ve flown the Cessna 172, a relatively simple airplane (at least compared to a modern airliner). Even so, the 172‘s checklist contains over 100 items! Here’s the checklist for the B-25, a WWII-era bomber. As you can see, there’s a lot to get right:

(image: U.S. Army Air Forces – Office of Flying Safety / Internet Archive)

Do you think you could remember all this all the time? Me neither! But you know that every item on that checklist has been the source of trouble, perhaps even a fatality, for some unfortunate pilot.

Let’s return to Dr. Gawande’s close call. During surgery one day, he accidentally makes a tear in a patient’s vena cava (one of the large veins that carries blood into the heart):

But we had run the checklist at the start of the case. When we had come to the part where I was supposed to discuss how much blood loss the team should be prepared for, I said, “I don’t expect much blood loss. I’ve never lost more than one hundred cc’s.” I was confident. I was looking forward to this operation. But I added that the tumor was pressed right up against the vena cava and that significant blood loss remained at least a theoretical concern. The nurse took that as a cue to check that four units of packed red cells had been set aside in the blood bank, like they were supposed to be—“just in case,” as she said.

They hadn’t been, it turned out. So the blood bank got the four units ready. And as a result, from this one step alone, the checklist saved my patient’s life.

Atul Gawande, The Checklist Manifesto 1

Reading this for the first time was very moving—if you’re a process aficionado, it doesn’t get more beautiful than this. Of course, I highly recommend reading The Checklist Manifesto for the whole story, including how the nascent adoption of the checklist in medicine has significantly reduced surgical complications. Also, take a look at Gawande’s companion “Checklist for Checklists,” which outlines the principles for creating a really effective checklist.

But, you don’t need any more preparation, you’re ready to begin working with checklists right now. I hope I’ve made the case for how simple yet powerful they are! When you start using them, you too can experience the kind of payoff Dr. Gawande had in his operating room, of a crisis averted and a job well done.

References:

- Header image: “STS-96 mission specialist Ellen Ochoa beside the Volatile Removal Assembly Flight Experiment (VRAFE) located in the Spacehab DM during the flight. Ochoa is floating upside down beside the module and is holding a checklist.” June 2, 1999. NASA. Retrieved from Flickr, https://www.flickr.com/photos/nasacommons/29863224741/.

- 1 Atul Gawande. The Checklist Manifesto: How To Get Things Right, (New York: Metropolitan Books, 2009), pgs. 18, 187, 191.

- 2 Walter J. Boyne, “The Checklist,” Air Force Magazine. August, 2013.

- 3 “WHO Guidelines for Safe Surgery” (First Edition, 2008), World Health Organization, pg. 8.

- 4 Model 299 Crash Fact Sheet. National Museum of the US Air Force.