The Web as I envisaged it, we have not seen it yet. The future is still so much bigger than the past.

Tim Berners-Lee

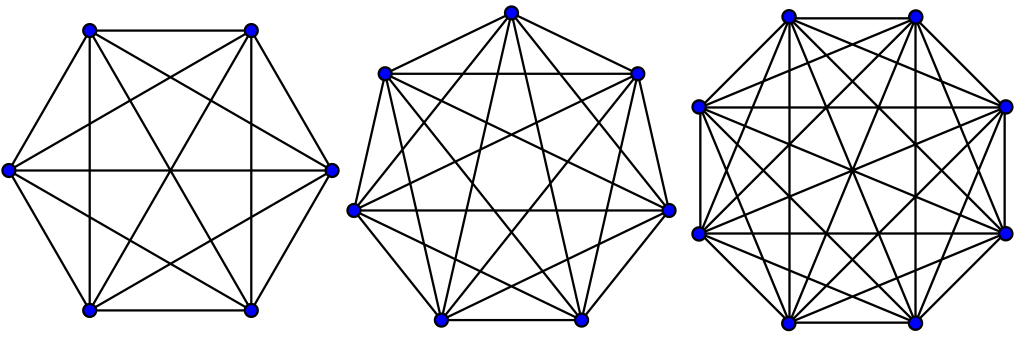

It all started with just one:

Pretty lonely, huh? Then another showed up:

That’s better. A third joined in:

A fourth and fifth too:

Then six, seven, and eight really opened up the possibilities to connect:

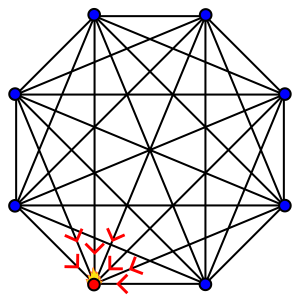

Then, one got a little too popular and things started to heat up:

It’s a familiar story…

Counting Connections

Highly interconnected systems are among the most important features of our modern industrialized civilization. To name just a few examples: roads, the Internet, pipelines, electrical grids, financial markets, and waterways. If you somehow don’t rely on any of those and think you’re not involved, consider human organizations too: businesses, clubs, governments, churches, families, and social circles. You will see how the growth of connections within these networks is a double-edged sword: it simultaneously makes a system more useful and increases the likelihood of congestion.

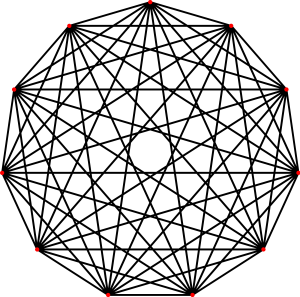

Let’s start the discussion by introducing the Complete Graph, a powerful model whose form I’ve encountered again and again in so many different contexts. Whatever kind of systems you troubleshoot, there’s likely a portion that resembles this form. The Complete Graph is a mathematical term for a model where every vertex is connected to every other vertex (mathematicians call these connections “edges”). Visually, they look like the images in the introduction to this essay. Here’s a Complete Graph with 11 vertices:

The number of connections in a Complete Graph can be described mathematically using the standard formula for combinations (valid for n > 1):

$$\binom{n}{2} = \frac{n!}{(n-2)!\,2!}$$

Alternatively, this can also be expressed as:

$$\frac{n^2 - n}{2}$$

You can see that there is a quadratic term (n²) in this formula, which will come to dominate its scaling as n grows. In other words, the total number of connections will expand much faster than the number of vertices. How fast will the edges grow? Let’s look at some numbers:

| # of Vertices | # of Edges | Ratio (E:V) |
|---:|---:|---:|
| 1 | 0 | 0 |
| 2 | 1 | 0.5 |
| 3 | 3 | 1.0 |
| 4 | 6 | 1.5 |
| 5 | 10 | 2.0 |
| 6 | 15 | 2.5 |
| 7 | 21 | 3.0 |
| 8 | 28 | 3.5 |
| 9 | 36 | 4.0 |
| 10 | 45 | 4.5 |
| 100 | 4,950 | 49.5 |
| 1,000 | 499,500 | 499.5 |
| 10,000 | 49,995,000 | 4,999.5 |
| 100,000 | 4,999,950,000 | 49,999.5 |
| 1,000,000 | 499,999,500,000 | 499,999.5 |
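The edge counts in the table can be reproduced with a short Python sketch. It simply implements the combinations formula; the function names are illustrative, and `math.comb` (Python 3.8+) is used only as a cross-check.

```python
import math

def complete_graph_edges(n: int) -> int:
    """Edges in a Complete Graph with n vertices: (n**2 - n) / 2, i.e. C(n, 2)."""
    if n < 0:
        raise ValueError("vertex count must be non-negative")
    return n * (n - 1) // 2  # n * (n - 1) is always even, so this is exact

def edge_vertex_ratio(n: int) -> float:
    """Edges per vertex, which simplifies to (n - 1) / 2 for n >= 1."""
    return complete_graph_edges(n) / n if n else 0.0

for n in (1, 2, 10, 100, 1_000_000):
    assert complete_graph_edges(n) == math.comb(n, 2)  # cross-check the formula
    print(f"{n:>9,} vertices: {complete_graph_edges(n):>15,} edges, ratio {edge_vertex_ratio(n):,}")
```

Because the ratio works out to (n - 1) / 2, it keeps growing in step with the network itself.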

The important thing to notice in the table above is the growth in the ratio of edges to vertices. This matters because, in any networked environment, the vertices are typically not equal, nor are the connections among them random. If the Complete Graph represents the people working in a corporation, only one is the CEO; if it’s a network of roads, there might be only a few streets that go into a giant stadium’s parking lot. Therefore, a theoretical understanding of network effects is usually paired with the Pareto Principle, which you might have heard as the “80/20 rule.”

The economist Vilfredo Pareto noticed that 80% of the land in Italy was owned by about 20% of the population. Pareto surveyed other countries and noticed a similar distribution of ownership. Since then, this same pattern has been observed in countless other contexts from sales (“80% of sales at company XYZ comes from 20% of its customers”) to health care spending (“20% of patients consume 80% of healthcare resources”).

Now, there’s nothing magical about the numbers 80 and 20; they’re just representative of the Power Law distribution. Visually, it looks like this:

(image: Husky / Wikimedia Commons)
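To see why 80 and 20 are just one point on a curve, consider the Pareto distribution, a classic power law. For a shape parameter α greater than 1, the share of the total held by the top fraction p of a population works out to p^(1 - 1/α). The sketch below evaluates that formula; choosing α = log₄5 (about 1.16) happens to reproduce the 80/20 split exactly. The parameter values are illustrative, not Pareto’s own data.

```python
import math

def top_share(p: float, alpha: float) -> float:
    """Fraction of the total held by the top fraction p of a Pareto(alpha) population.

    Follows from the Pareto Lorenz curve L(F) = 1 - (1 - F) ** (1 - 1/alpha),
    which is only defined for alpha > 1.
    """
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    if alpha <= 1:
        raise ValueError("alpha must be greater than 1")
    return p ** (1 - 1 / alpha)

alpha_80_20 = math.log(5) / math.log(4)  # about 1.161
print(round(top_share(0.20, alpha_80_20), 3))  # 0.8: the top 20% holds 80%
print(round(top_share(0.01, alpha_80_20), 3))  # the share held by the top 1% alone
```

Smaller values of α make the distribution more lopsided; larger values make it more even.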

The power law is found everywhere in nature*, from the magnitude of earthquakes to the size of craters on the Moon. When it comes to human-based activity, we find the form repeated in such wide-ranging contexts as contributors to Wikipedia and the population of cities. For my first “real” job after college, I was hired by a company called Alexa to analyze data on how people were using the Internet. There, I discovered the power law by accident: I remember graphing the distribution of traffic amongst the top websites, messing around with the scale of the axes. I changed them to logarithmic and suddenly the relationship turned into a straight line!

I stood back and said, “whoa.” I didn’t know what it meant, but I thought that a straight line was a bit weird. There was an engineer in another department who had a PhD in physics, so I printed out the graph and marched over to his office. He looked at it, paused, and said “Power Law. Look it up.”

I did.

(*If you’re interested in learning more about the Power Law and lopsided distributions, see my study “Tube Of Plenty: Analyzing YouTube’s First Decade,” where I analyzed the metadata of over 10 million YouTube videos.)
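Why does a power law turn into a straight line on logarithmic axes? If y = C · x^(-a), then log y = log C - a · log x, which is linear in log x with slope -a. The sketch below generates synthetic rank-versus-traffic data that follows an exact power law (the constants are made up, not Alexa’s data) and recovers the exponent with a least-squares fit in log space.

```python
import math

def power_law(rank: int, c: float = 1e6, a: float = 1.0) -> float:
    """Hypothetical traffic for the site at a given rank: y = c * rank**(-a)."""
    return c * rank ** (-a)

def loglog_slope(xs, ys) -> float:
    """Least-squares slope of log10(y) plotted against log10(x)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    mx = sum(lx) / len(lx)
    my = sum(ly) / len(ly)
    num = sum((u - mx) * (v - my) for u, v in zip(lx, ly))
    den = sum((u - mx) ** 2 for u in lx)
    return num / den

ranks = list(range(1, 1001))
traffic = [power_law(r, a=1.2) for r in ranks]
print(round(loglog_slope(ranks, traffic), 6))  # -1.2: the slope recovers -a
```

Real data is noisier, so the points scatter around the line rather than sitting exactly on it.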

(image: Russell Lee / Library of Congress)

Call Me, Definitely

Before we get into the downsides of interconnected systems (and hence the need to troubleshoot and optimize them), we should first recognize their immense benefits: being able to deliver information, money, fuel, water, electricity, packages, or people between arbitrary points is a huge boon to humanity. Most of the stuff that sustains our lives flows through networks of some kind.

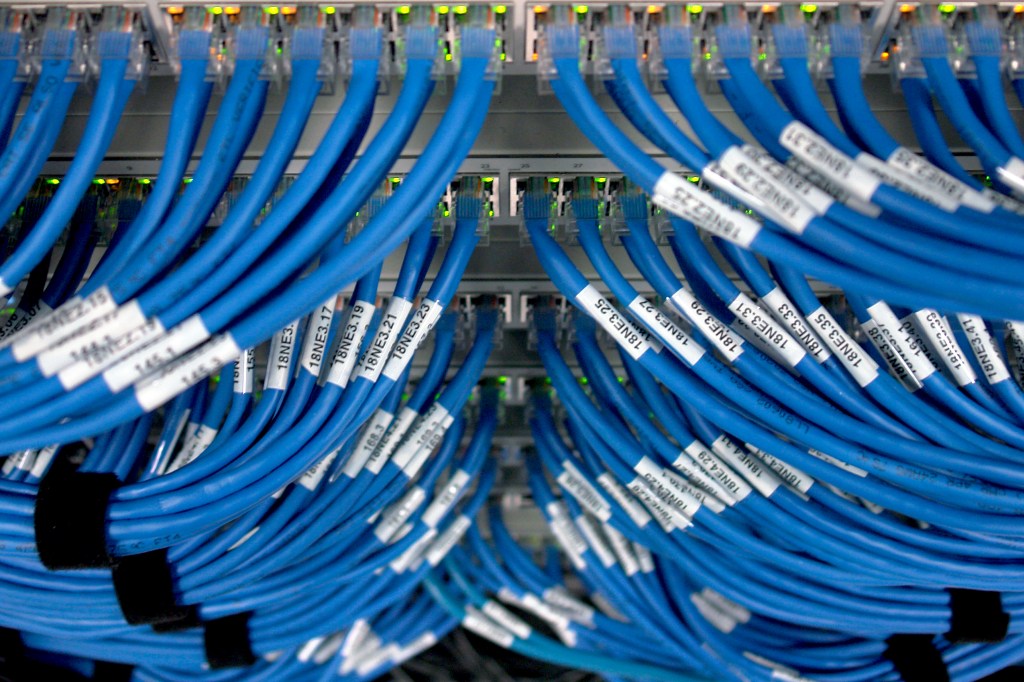

One of the widely cited theories about why networks become more useful as they grow is Metcalfe’s Law, which states that “the value of a telecommunications network is proportional to the square of the number of connected users of the system.” Bob Metcalfe is the co-inventor of the ubiquitous Ethernet networking standard, which is the foundation for most of the data networks in existence today. Some people have criticized the way Metcalfe’s Law has been used, arguing that Metcalfe’s own formulation and intent were narrower (after all, maybe he was just trying to get people to buy Ethernet gear). Also, the “value” the law cites isn’t given in any measurable terms.

However, I think the underlying principle behind Metcalfe’s Law is easily understood and useful. Imagine if there were just 2 telephones in the world: sure, they would presumably be useful to the 2 lucky people who had them, but it’s nothing compared to what you can do if there are billions of phones. You can order a pizza, alert someone you’re going to be late, book a hotel, ask out that good-looking girl or guy, or get a taxi to pick you up. What you can accomplish with a phone increases dramatically when everyone you want to communicate with has one. Plus, each new phone that joins the network makes the entire system even more beneficial, allowing more potential contacts to happen.

Other Ways To Connect

There are also counter-trends that frustrate the value and existence of networks that try to expand indefinitely. The “value” isn’t the only thing that increases with the number of interconnections: congestion, maintenance costs, fraud, theft, and a whole host of other negative effects are also likely to appear. Even though an “any-to-any” concept of a network may be marketed to end-users, scaling becomes its own challenge because the actual implementation requires compromises between connectivity and costs.

For example, my computer might theoretically be able to contact every other device on the Internet (just like the Complete Graph would suggest). However, there isn’t a cable going from my computer to every other computer on the planet: the Complete Graph has little to do with how the Internet is actually constructed. This is because the Complete Graph is a very expensive model to actually use when building a large interconnected system.

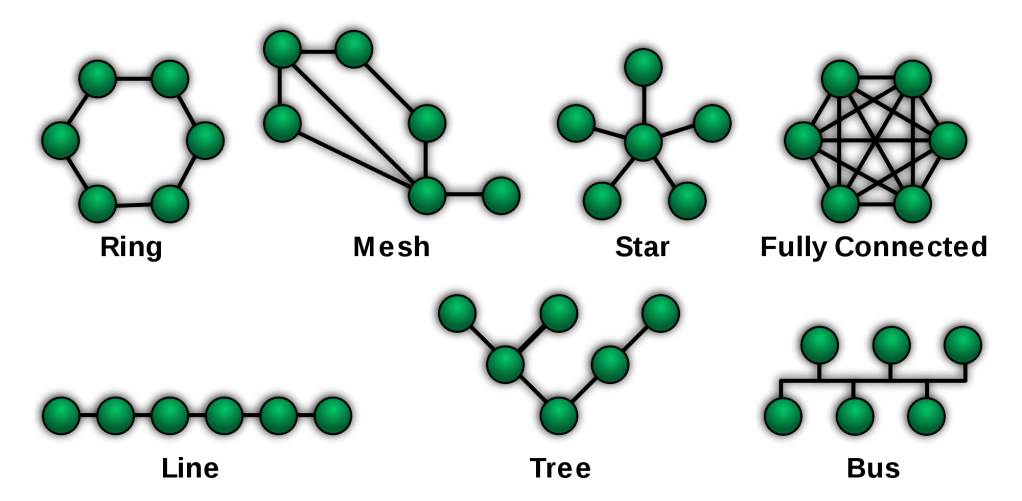

On that note, let’s introduce some of the more common topologies that you’ll see when observing networks in the wild:

Let’s go through each of these network types, thinking about how they solve certain kinds of problems and finding examples in the real world:

Ring

- Pros: each node has two options for egress. Should one of the links be severed, connectivity between nodes is still possible by traversing the network in the opposite direction.

- Cons: each node is part of the network’s path. If a node or conduit malfunctions, the throughput of the network is immediately halved and the result is a line network.

- Examples: one of the ISPs I used for business had a ring network connecting all of their data centers in the Bay Area. If one of these connecting links went offline, data would continue to flow around the ring in the opposite direction.

Mesh

- Pros: multiple pathways through the network improve redundancy and throughput.

- Cons: additional interconnections come at a price. Also, because each node can potentially be used as a conduit, automated smarts are needed to make the network resilient and efficient.

- Examples: a popular model for wireless networks, because adding links between nodes only requires proximity (i.e., there are no cables to lay). Meshes are harder to implement for physical networks because of the increased cost.

Star

- Pros: centralized control and isolation of nodes.

- Cons: the center node is an obvious point of congestion, as all nodes must use it to reach any other point on the network.

- Examples: the hierarchical relationship of employees to their manager is a type of star network.

Fully Connected (aka, “full mesh”)

- Pros: this model wins the award for redundancy! Because every node is directly linked to every other node, the removal of one will have no impact on material attempting to traverse the remaining paths.

- Cons: expensive to implement, since the number of links (and with it, the cost) rises quadratically as the network grows. Also, because each node can be contacted directly by all the others, congestion problems can show up at any node. This is in contrast to more conventional networks, like the star topology, where the predictable choke point can be strengthened.

- Examples: large, fully connected networks are extremely rare in an industrial context (because of their cost). However, you have direct experience with them. Most people belong to social networks (like their immediate family or a tight-knit group of friends) that operate like a fully connected network.

(image: Carol M. Highsmith / Library of Congress)
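To put the cost comparison between topologies in numbers, here is a small sketch that counts the links each one needs for n nodes. It assumes one physical link per edge and ignores link length, capacity, and every other real-world cost factor; the function name is illustrative.

```python
def links_needed(topology: str, n: int) -> int:
    """Links needed to join n nodes (n >= 3) in a few idealized topologies."""
    if n < 3:
        raise ValueError("need at least three nodes")
    if topology == "star":
        return n - 1              # each outer node links only to the hub
    if topology == "ring":
        return n                  # each node links to its two neighbors
    if topology == "full":
        return n * (n - 1) // 2   # every pair of nodes gets its own link
    raise ValueError(f"unknown topology: {topology!r}")

for n in (5, 50, 500):
    print(n, {t: links_needed(t, n) for t in ("star", "ring", "full")})
```

At 500 nodes, the fully connected design needs 124,750 links versus 500 for the ring and 499 for the star.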

Line

- Pros: like the ring, every node can pass material in either direction. Also, because each node only needs to be connected to the next closest node, this can be an inexpensive network to implement.

- Cons: every node needs to be operational to pass material through the system from end to end. Also, because there is just a single path of flow, the severing of a link will cut the network into two parts.

- Examples: oil pipelines. Or, a particularly hilarious application you might have played at a party: the “telephone game.”

Tree

- Pros: combines the star form with other topologies, leading to more manageable clusters of nodes, which then connect to other parts of the network via trunks.

- Cons: like any model that uses single links to connect parts of the network, a severed trunk connection will leave the network fragmented.

- Examples: hybrids like the tree network are the model most likely to be found in real-world use. Most organizations that create their own networks will (eventually) end up with some kind of tree structure. An example that immediately comes to mind is any large airline’s route map (my favorite part of any in-flight magazine), usually having several hubs (“hub-and-spoke” star networks) that are connected by flights between them (trunks).

Bus

- Pros: this might be the cheapest of all the network types to implement. Links only need to make it to the nearest part of the shared bus to connect to the network.

- Cons: like the line network, a shared conduit limits throughput. Severing of the bus at any point can leave the network split in pieces.

- Examples: early Ethernet networks using 10BASE2 technology.

(image: Andrew Hart / CC BY-SA 2.0)
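The failure modes described for the ring, line, and star can be checked with a small graph search. This sketch (an illustration, using plain dictionaries of neighbor sets) severs one link or removes one node, then uses breadth-first search to report whether the surviving nodes can still all reach one another.

```python
from collections import deque

def ring(n):
    """Ring: node i links to i - 1 and i + 1, wrapping around at the ends."""
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}

def line(n):
    """Line: like a ring, but the two ends are not joined."""
    g = {i: set() for i in range(n)}
    for i in range(n - 1):
        g[i].add(i + 1)
        g[i + 1].add(i)
    return g

def star(n):
    """Star: node 0 is the hub, and every other node links only to it."""
    g = {0: set(range(1, n))}
    g.update({i: {0} for i in range(1, n)})
    return g

def cut_link(g, a, b):
    """Return a copy of g with the link between a and b severed."""
    h = {node: set(nbrs) for node, nbrs in g.items()}
    h[a].discard(b)
    h[b].discard(a)
    return h

def remove_node(g, x):
    """Return a copy of g with node x (and all of its links) removed."""
    return {node: nbrs - {x} for node, nbrs in g.items() if node != x}

def is_connected(g):
    """Breadth-first search: can every node reach every other node?"""
    if not g:
        return True
    start = next(iter(g))
    seen = {start}
    queue = deque([start])
    while queue:
        for nbr in g[queue.popleft()]:
            if nbr not in seen:
                seen.add(nbr)
                queue.append(nbr)
    return len(seen) == len(g)

n = 8
print("ring, one link cut:", is_connected(cut_link(ring(n), 3, 4)))  # True
print("line, one link cut:", is_connected(cut_link(line(n), 3, 4)))  # False
print("star, hub removed: ", is_connected(remove_node(star(n), 0)))  # False
print("star, leaf removed:", is_connected(remove_node(star(n), 5)))  # True
```

The ring survives a single cut by routing the other way around, while the line splits in two and the star collapses once its hub is gone.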

Too Many Requests

A large-scale concert on sale is, in essence, a denial-of-service attack.

Andrew Dreskin, Ticketfly CEO

There’s a downside to all this interconnectedness: what happens when the whole world shows up at your doorstep? When it comes to scaling networked systems, it may be easy to add a node by redrawing a graph on paper, but your actual implementation may not be so easily adaptable. The process of taking an abstract idea about how a network should function and putting it into physical form inevitably includes compromises about capacity. If there were no tradeoffs or cost, you’d gladly have your house plumbing sized to run a large waterpark, or your Internet connection transfer at the rate of a gazillion bits-per-second. However, capacity isn’t free and so every chosen network implementation must reconcile our infinite desires with our finite means.

A note about “troubleshooting” network problems: relieving congestion by adding resources or changing network models can easily cross over into the land of engineering and invention. Drawing that distinction isn’t a slavish devotion to semantics; it’s about recognizing the limits of your equipment. Sometimes, a piece of gear can be operating exactly as designed and still be unable to handle the load generated by the network to which it is attached. In these cases, the machine isn’t broken; it’s just being used in a context that isn’t productive. What has likely changed is the circumstances surrounding its use (often, the volume of stuff being sent through the network).

The pure “troubleshooting” answer to network model problems would be: the system wasn’t designed for this level of usage, so you should decrease usage. You may think this is an unsatisfactory answer, but this is actually a bona fide solution. I’ve seen smart business owners do this, refusing work rather than inviting the chaos of running at overcapacity. I always appreciate when a maître d’ at a busy restaurant simply turns me away, rather than seating me for a never-ending meal à la Waiting for Godot.
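Refusing work can be built into a system deliberately. A common pattern is admission control, or load shedding: once a service is at capacity, new requests are turned away immediately instead of joining an ever-growing queue (web services often signal this with an HTTP 429 “Too Many Requests” or 503 “Service Unavailable” response). Below is a minimal sketch using a hypothetical `Restaurant` with a fixed number of tables.

```python
class Restaurant:
    """Hypothetical host stand that turns guests away instead of overbooking."""

    def __init__(self, tables: int):
        self.tables = tables
        self.seated = 0

    def request_table(self) -> bool:
        """Seat a guest if a table is free; otherwise refuse right away."""
        if self.seated >= self.tables:
            return False  # shed load: a quick "no" beats an endless wait
        self.seated += 1
        return True

    def leave(self) -> None:
        """A party finishes and frees its table."""
        if self.seated > 0:
            self.seated -= 1

host = Restaurant(tables=3)
print([host.request_table() for _ in range(5)])  # [True, True, True, False, False]
host.leave()
print(host.request_table())                      # True
```

The point of the design is that the refusal is cheap and immediate, so the guests who are seated still get served at full speed.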

People do this in their personal lives all the time: there’s simply a limit to the number of close social connections you can maintain. Partly, this is because maintaining relationships takes the scarce commodity of time, but there might be another limitation: our minds. Robin Dunbar, the Oxford anthropologist and creator of the eponymous Dunbar number, accurately predicted the size of various primate social groups by looking at the size of their brains. Using this method, he then turned his sights on us, predicting that humans would have social circles of approximately 150 people:

The essence of my argument has been that there is a cognitive limit to the number of individuals with whom any one person can maintain stable relationships, that this limit is a direct function of relative neocortex size, and that this in turn limits group size.

Robin Dunbar, Co-evolution of Neocortex Size, Group Size and Language in Humans

These mental limitations to our social circles appear to hold even in the era of Facebook and Twitter, with Dunbar noting that “when you actually look at traffic on sites, you see people maintain the same inner circle of around 150 people that we observe in the real world.” While we may have to tacitly accept these limitations in our social lives, the profit motive and the desire to constantly reach for new heights make us rarely satisfied with the troubleshooting answer of “do less” in other contexts. If you’re a business owner, knowing that there is business out there that you can’t satisfy will gnaw away at you. This compulsion drives us to grow our networked systems by swapping out the underlying model with something more suitable.

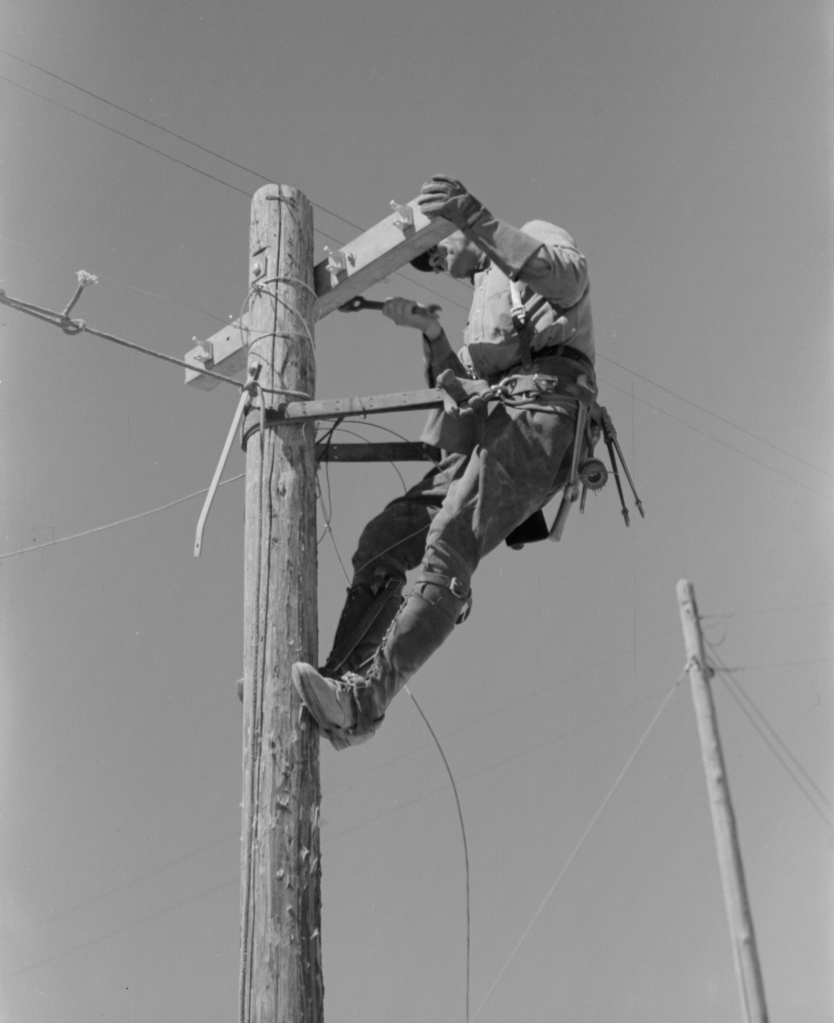

To prime our thinking about scaling networks, a good place to start is with the public switched telephone network. Let’s consider the Central Office, a building block of the phone system (still in use): this is the physical place where all the phone lines in a locality are terminated. There are practical limits to the length of phone cables, both because of the signal degradation that sets in over longer distances, as well as the expense of stringing them along poles or through underground conduits.

Therefore, you’d expect that the size of a typical Central Office (CO) would be limited by these geographical and economic constraints. You’re not going to be able to have a single CO for a whole country (except for maybe Monaco or the Vatican). If you were laying out a new city, you’d need to plan to have a certain number of these facilities: X number of COs per Y square miles of land. You can also predict that the type of equipment deployed in a CO would be of a certain scale, designed perhaps for thousands, but certainly not millions of incoming lines. Why? Again, economics. The industry will consolidate around certain implementations and designers will take advantage of these economies of scale. Put another way, if everyone else is building COs of a certain size because the available equipment favors it, then you will too.

Many systems use the abstract network models shown above, which seem flexible and conducive to scaling to any number of nodes, yet their in-the-flesh implementations will typically only function within a fairly narrow ratio of edges to vertices. The problem is that networked systems are deployed in contexts that are ever-changing: the ebb and flow of businesses, organizations, families, cities, and states.

Scaling Inside The Existing System

To understand the scaling of networked systems, let’s start with the low-hanging fruit of their built-in capacities. Think of a simple electrical power strip with, let’s say, 6 outlets:

Conceptually, when you plug devices into a power strip you form a star network. Plugging in the power strip itself then creates a tree network. The scalability is built-in, yet limited: 1 to 6 devices can be attached to this particular power strip. Let’s say you buy one and plug in your laptop, monitor, printer, scanner, modem, and router. It’s a full house!

Then, you go on a shopping spree and buy a stereo and a space heater. Hmm…you can see this presents a problem. Previously, we made a choice that led to a purchase and the result was a fixed amount of scalability. Even though a power strip might be a star network in the abstract, you can’t just draw lines and magically have more outlets appear. Maybe you buy a second power strip, raising your office capacity to 12 outlets. That’s great, but this method of scaling can’t continue indefinitely. The power strips themselves are plugged into a circuit that has a fixed capacity.

From this simple example, you can see that networked systems, when actually implemented, will have these scaling thresholds (or “cliffs,” should you be pushed over them). There will usually be a range within which it’s easy to scale, but after that it will require more resources. Beyond that, you will eventually come to another breaking point where adding more of the same will not be enough to increase capacity. At that point, you’ll need to rethink the underlying model.

(image: Library of Congress)
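The power strip’s two cliffs (first running out of outlets, then running out of circuit capacity) can be modeled in a few lines. Every number here is an illustrative assumption: a hypothetical 15-amp, 120-volt circuit (1,800 watts) and made-up device wattages, so check your own equipment’s ratings rather than relying on these.

```python
OUTLETS_PER_STRIP = 6
CIRCUIT_WATTS = 15 * 120  # assumption: a 15 A circuit at 120 V, i.e. 1,800 W

def plug_in(devices, strips):
    """Plug devices in one at a time and report the first limit that is hit."""
    outlets = OUTLETS_PER_STRIP * strips
    load = 0
    for count, (name, watts) in enumerate(devices, start=1):
        if count > outlets:
            return f"out of outlets at {name}: add a strip (scale within the system)"
        if load + watts > CIRCUIT_WATTS:
            return f"circuit overloaded at {name}: rethink the model"
        load += watts
    return f"all {len(devices)} devices running, {load} W of {CIRCUIT_WATTS} W"

# Hypothetical wattages, for illustration only.
office = [("laptop", 90), ("monitor", 40), ("printer", 300), ("scanner", 20),
          ("modem", 10), ("router", 10), ("stereo", 100), ("space heater", 1500)]

print(plug_in(office, strips=1))  # first cliff: the seventh device has no outlet
print(plug_in(office, strips=2))  # second cliff: the heater exceeds the circuit
```

Buying a second strip fixes the first limit but does nothing about the second; only a change to the underlying model (another circuit, or fewer devices) does.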

Scaling Outside The Existing System

Human-based organizations are a great example of how network models need to be discarded and remade, especially during a period of growth (or decline, as the case may be). What works for a solo enterprise will not work for 10 people, what works for 10 people won’t work for 100, what works for 100 people won’t work for 1,000, and so on. I’m not saying that the network model exhaustively explains every reason why, but it is a powerful framing of the problem.

For very small groups, it may be possible to have a “fully-meshed” network model. Think of a small business, like the auto repair shop my grandfather used to run. It was just him and a partner. With only a few people working together, it’s definitely possible for everyone to interact with everyone else. If you share the same physical workspace, you can be privy to the same information and develop a meaningful personal relationship with everybody (and they with you), approaching the ideal of the fully-connected network envisioned in the Complete Graph model.

(image: Library of Congress)

A fancy piece of new equipment glistens in the sunshine. You may bask in all of its chrome-reflected glory. But, no matter how many rays are reflected, its capacity is finite. It’s also likely to be explicitly stated, usually somewhere in the manual (albeit in the small print). While these technical limitations are known and respected, our human ones are frequently disregarded (to our detriment).

For the sake of productivity (and everybody’s sanity), as a firm adds people it becomes crucial to migrate to a structure that limits interactions between the parts of your organization’s network. The reason is plain: our time and ability to focus are scarce. Imagine if you, a lone person, had to keep up with all the employee-to-employee interactions inside a group of millions, as in a mega-corporation like Walmart or McDonald’s. It simply wouldn’t be possible. It would be boring too. Like, really boring.

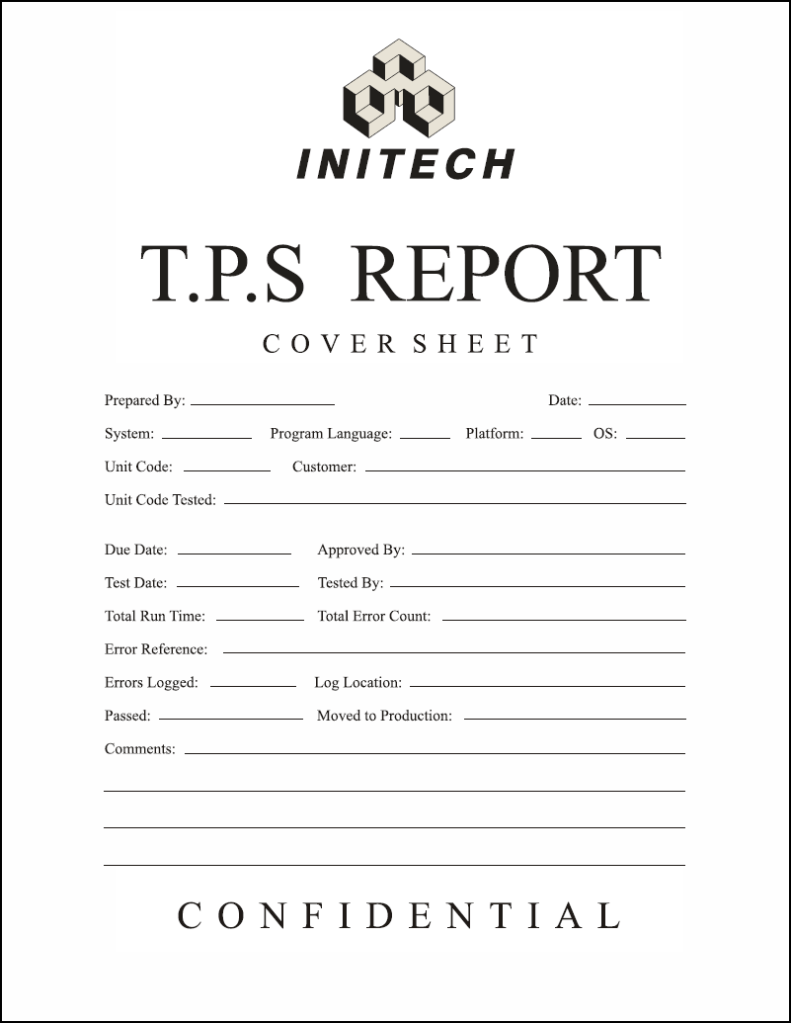

Isolation may sound like a bad word, but when it comes to your job it’s an absolute necessity for you to get anything done. This is one reason why companies create barriers of all sorts, from the physical (e.g., offices and cubicles) to the hierarchical (e.g., you can only access a particular person through their boss or secretary) to the procedural. Along the lines of this last category, I present the form:

(image: Don Meyers / CC BY-SA 3.0)

The very low-tech form is an example of using procedures to standardize how parts of an organizational network interact with one another. I’m not sure who invented the form, the kind which needs to be filled out in triplicate, but if they didn’t someone else would have. Before the venerable form appeared, people were getting requests verbally, scrawled on post-it notes, via emails, voicemails, smoke signals, in skywriting and interpretive dance. Having other things to do, all this free-form access from other parts of the organizational network probably got a bit tedious. Then the clouds parted and the form appeared. Even though the entire group may contact a single employee, those little lines provide protection when they do.

I’m not promoting any particular management structure (flat, functional, product, geographical, etc.), because isolation, hierarchies, and procedures each have their own problems. Further, implementing a particular system will also generate a host of unintended consequences: the end result may be superior, but that doesn’t mean that customers, vendors, and employees will all simultaneously be better off. However, you need to be sympathetic to the underlying argument for the existence and pursuit of these competing organizational systems: the limiting of unwanted network effects.

Growth will present scaling problems to your networks (both human and machine), exposing unsustainable relationships between edges and vertices. Just like our power strip, it may be possible to scale within the existing system. A single manager may well be able to increase the number of subordinates from 2 to 3, and again from 3 to 4. A router might be able to switch 1,000 Mb/s of traffic just as well as 1 Mb/s. However, there will come a time when the old ways of scaling will no longer be possible and you must change your network model.

(image: Russell Lee / Library of Congress)

The Physics Of The Network

Any time you centralize something, whether it be information, decision-making, or physical goods, there are going to be tradeoffs. As networks are often the means by which these things are concentrated, we should look at their role in these schemes and discuss the pros and cons of bringing things together.

When you move stuff around a network, you lose its original context. This becomes a problem for the transfer of information, especially the kind that is used to coordinate the actions of individuals within a group. If you’re a lowly army corporal standing in the general’s office and he gives you an order, several important things are quite clear: you know the general is speaking to you and the general knows with whom he’s speaking. If you’re brave enough, you can even ask for clarification.

But, let’s say you are that same corporal, thousands of miles away in the middle of a heated battle, and someone hands you a piece of paper telling you what to do. At the bottom is the general’s name…but how do you know it came from him? Has the order been changed or delayed along its path? You can see that by removing context, networks present problems of trust and identity.

(image: John Vachon / Library of Congress)
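Modern data networks tackle part of the corporal’s problem with cryptography. One standard tool is a message authentication code (MAC): sender and receiver share a secret key, and a tag computed over the message shows that it came from a key holder and wasn’t altered in transit. This sketch uses Python’s standard `hmac` and `hashlib` modules; the key and the orders are made up. Note what it does not cover: distributing the key safely, and detecting an old order that has been delayed or re-sent, which needs extra measures such as timestamps.

```python
import hashlib
import hmac

# Hypothetical shared secret, known only to the general and the corporal.
SHARED_KEY = b"hypothetical-key-known-only-to-general-and-corporal"

def sign(order: str) -> str:
    """General's side: compute an HMAC-SHA256 tag over the order text."""
    return hmac.new(SHARED_KEY, order.encode("utf-8"), hashlib.sha256).hexdigest()

def verify(order: str, tag: str) -> bool:
    """Corporal's side: recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign(order), tag)

order = "Hold the bridge until dawn."
tag = sign(order)
print(verify(order, tag))                          # True: authentic and unaltered
print(verify("Abandon the bridge at once.", tag))  # False: the text was changed
```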

Another interesting aspect of networks is that their capacities are often small in relation to the type of material they transmit. This is because it’s (relatively) expensive to move things around. Walking around San Francisco, I’ll often see a crowded street with cars taking up every possible bit of free space along the curb. I’ve wondered: “What would happen if everyone decided to use their car at the same time?” The capacity of our roadways is small compared to the number of cars in existence. To the extent that our roads are usable in a crowded city, we rely upon the vast majority of cars not being in use! Whatever medium you can think of (oil, information, cars), their corresponding networks (pipelines, the Internet, roads) will only be able to transmit a small fraction of the available material at one time.

When it comes to the movement of information on a network, in addition to any context that might be lost, an abbreviation must also take place. This has always been true: imagine that you were the commander of a far-flung outpost like Vindolanda, sitting on the edge of the Roman Empire’s frontier by Hadrian’s Wall. One day, you get a request from your superiors asking you for a report of the goings-on over the last year. There is a nearly infinite number of things you could include in your reply: enemy incursions, movements of troops in and out of the fort, levels of supplies, agricultural output, temperature, rainfall, births, deaths, disputes, details of social occasions, or the latest gossip about Agrippina and Plinius (they were seen kissing behind the Thermae!). But, those wooden tablets aren’t exactly easy to write on, plus the courier only has room for one in his pouch and he’s leaving in 15 minutes. So, you just scribble the following: “We’re all fine. Still alive. Send wine.”

Funnily enough, that’s probably all they could reasonably handle within the busy context of running a large empire. As a general rule, the nodes on the edge of a network can produce much more data than can be transferred, analyzed, and acted upon. There is an infinite amount to be measured within the simplest of systems, so any time you collect data, you are gathering only a tiny fraction of what can be known (see “Is This Normal?” for more). Because of the expense of preparing the data and then moving it, centralizing information usually involves a further culling. The core can’t know everything about the edges, and the edges can’t know everything about themselves.
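In data systems, this culling often takes the form of summarization: the edge node keeps its raw measurements and sends only a few aggregate numbers upstream. Here is a minimal sketch with hypothetical hourly temperature readings from the fort; which statistics survive the trip is a design choice, and everything else stays at the edge.

```python
from statistics import mean

def summarize(readings):
    """Reduce raw edge measurements to the few numbers the core will actually read."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 1),
    }

# Hypothetical: a week of hourly temperature readings (168 values) at the outpost.
raw = [4.0 + (hour % 24) * 0.25 for hour in range(24 * 7)]
report = summarize(raw)
print(report)
print(f"sent {len(report)} numbers instead of {len(raw)}")
```

Nothing in the report reveals, for example, which hour was warmest; that detail is lost to the core.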

Lastly, we should note that centralization (via networks) is often pursued as a measure of control. Whatever it is you’re trying to protect (information, resources, etc.), the theory is that it will be easier to do so when it’s all in one place. The adage “don’t put all your eggs in one basket” neatly summarizes the potential downside of concentrating important things.

(image: btphotosbduk / CC BY 2.0)

Breaking And Making New Connections: Reforming Congested Networks

Whether we’re talking about Dunbar’s Number or the availability of open ports in a telephone switch, the common theme for system builders is: be aware of the scalability thresholds that limit the size of the networks under your care (and of which you are a part). When the easy options for expansion have been exhausted, here are some basic strategies for mitigating network problems:

Augment

This is the most obvious path to increase your network’s capacity and, because it’s along the lines of what you already know, will be the most tempting. If your Internet router is overloaded, you can buy one with more capacity. If a 4-lane highway is constantly jammed, you could widen it to 12 lanes. Whatever your network currently does, you can imagine replacing the component parts with ones that simply do more.

Prioritize

A network is a finite resource, so you should ask yourself if it is being used for the most urgent or valuable purpose. If a network is being utilized by customers, maybe you need to raise prices to bring usage under control. Toll roads are an easy-to-understand scheme that reduces congestion by giving access to those who value it most. It doesn’t have to be about the bottom line: whatever your organization deems important, you want to make sure that your networks are similarly aligned.
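Prioritization can be expressed as a simple allocation rule: when demand exceeds capacity, serve the highest-value uses first. This sketch ranks hypothetical trips by a made-up “value” score (standing in for willingness to pay a toll, or for whatever your organization deems important) using Python’s built-in `sorted`.

```python
def allocate(requests, capacity):
    """Admit at most `capacity` requests, highest value first.

    `requests` is a list of (name, value) pairs. Python's sort is stable,
    so requests with equal value keep their original order.
    """
    ranked = sorted(requests, key=lambda req: req[1], reverse=True)
    return [name for name, _ in ranked[:capacity]]

# Hypothetical trips competing for a road with room for three of them.
trips = [("sightseeing", 2), ("ambulance", 100), ("commute", 15),
         ("delivery", 30), ("errand", 5)]
print(allocate(trips, capacity=3))  # ['ambulance', 'delivery', 'commute']
```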

Re-model

This is where you alter the network model, adopting a new form that changes how the various nodes connect to each other. Modifying the ratio of edges to vertices can go in either direction: congestion may lead you to seek ways to lower this number, but efficiencies can allow you to raise it too. Consider an overloaded manager, connected to subordinates in a typical star network: a common strategy is to split up teams that are too large, appointing additional leadership for the new groups, lowering the ratio between employees and managers (effectively creating a tree network in place of the former star shape). You can also imagine this going the other way: maybe a new HR system automates many of the tasks that used to burden a particular manager (scheduling, reporting, etc.), leading to an increase in the number of employees under their direction.
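Splitting an overloaded star into a tree can be sketched as arithmetic: if no one may have more than a given number of direct reports (the span of control), how many managers are needed at each level? The spans used below are assumptions for illustration, not management recommendations, and the model ignores everything about real teams except headcount.

```python
def org_levels(staff: int, span: int) -> list:
    """Headcount at each level when no one has more than `span` direct reports.

    Starts from individual contributors and adds managers level by level
    until a single person sits at the top (a tree replacing one big star).
    """
    if staff < 1 or span < 2:
        raise ValueError("need staff >= 1 and span >= 2")
    levels = [staff]
    while levels[-1] > 1:
        levels.append(-(-levels[-1] // span))  # ceiling division
    return levels

print(org_levels(12, span=12))  # [12, 1]: one manager, one big star
print(org_levels(12, span=4))   # [12, 3, 1]: three team leads under a manager
print(org_levels(2_000_000, span=8))
```

Lowering the span adds layers (and pushes people further from the core); raising it, as with the automated HR system, flattens the tree.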

Isolation and exposure are two more opposing themes that can drive the remodeling of your networks. We’ve talked about the need to limit access to nodes, especially when the context is an organization. There simply aren’t enough hours in the day for the CEO of a mega-corporation to be continually and directly accessed by everyone who works there. Even when you’re not the CEO, you probably have appreciated those jobs where you were left alone to focus on getting things done. Devolutions done in the name of isolation can transform star networks into trees, pushing nodes further away from the core.

Network reformations can also promote the opposite, exposing previously hidden nodes by increasing access to them. Maybe Bob from accounting is a little too reclusive, so you publish his telephone extension and office number in the employee newsletter. In computing, wireless mesh networks attempt to promote connectivity between nodes by allowing information to flow freely among them. Tear down those walls!

(image: Russell Lee / Library of Congress)

Standardize/Limit

Within a network, a way to preserve connectivity and reduce congestion is to enforce rules about how nodes connect to each other. Remember the mighty fill-in form: it all comes back to this idea of giving relief to overloaded nodes by placing restrictions on how others can connect to them. Standards for inputs ensure that a given node can process material in the most efficient manner. Another way to ensure a node can operate efficiently is to place limits on the rate it receives materials or instructions from elsewhere in the network.
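Limiting the rate at which a node receives work is commonly implemented with a token bucket: tokens drip into a bucket at a fixed rate up to a maximum, and each incoming request must take a token or be refused. This is a minimal sketch; the rate and burst size are arbitrary, and the clock is passed in explicitly so the example is deterministic.

```python
class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.last = 0.0

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` (in seconds) may proceed."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

bucket = TokenBucket(rate=1.0, capacity=3)
print([bucket.allow(now=0.0) for _ in range(5)])  # [True, True, True, False, False]
print(bucket.allow(now=1.0))                      # True: one token refilled after a second
```

The bucket size sets how large a burst the node will absorb, while the refill rate sets its sustained workload.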

Be Flexible

Network forms, along with their physical manifestations that we actually use to do work, all have a proper scale in which they can be used. A network’s context is constantly shifting within the ups and downs of your life and business. One model cannot satisfy your needs forever, so a fluid mindset regarding their use is paramount.

References:

- Header image: Trikosko, M. S., photographer. (1959). Women working at the U.S. Capitol switchboard, Washington, D.C. [Photograph]. Retrieved from the Library of Congress, https://www.loc.gov/item/2013651433/.

- The images of complete graphs used in this article are from the public domain collection on Wikimedia Commons (“Set of complete graphs”).

Regarding your fascinating discussion of isolation, perhaps instead of SCRUM we could go back to the way things were and be Data Processing professionals with budget, requirements and specs, and where we understood what status was and what progress was, however instead of waterfall we could follow Percona MySQL’s algorithm of synchronous multimaster transactions, where a transaction has to travel to all the master’s to get certified just before commitment. Then we wouldn’t have this transparency and collaboration that takes away from isolation and mental models, and instead there could be new conferences constantly hyping isolation and focus and insight instead. Instead of what we have now black box mgmt and testing, test automation and “continuous whatever” because the devs don’t have enough insight (too expensive) to prevent regressions 🙂 There is a line that QA and mgmt, and even devs will not cross and that is having insight–your machine model, this is why QA/test use of heuristics is actually quite timid when you look at it and is not the same as troubleshooting, it is black box, they don’t want insight.


I like your idea of an isolation conference. Would the attendees be allowed to talk to each other? Ha ha!

It sounds like you’ve had a mixed experience with Scrum and the rest of the Agile zeitgeist. In this article, I tried to convey the tradeoffs you encounter when moving between these various network forms. Their effects on a dimension like isolation<=>openness usually involve both pros and cons. Things like open floor plans, stand-up meetings, and pair programming are great at fostering collaboration and increasing awareness of what the team is working on. Using the network model, you could say that they promote connectivity between the nodes.

However, emphasizing one set of values usually diminishes another. A highly collaborative and open work environment might also be fatiguing for introverts (it’s noisier and requires a lot of social interaction), facilitate frequent interruptions, lead to a sense of constantly being monitored, etc. It’s easy for virtues to become vices when taken too far or applied in the wrong context. I guess the art of management is being aware of these tradeoffs as you try to find the best solution to the problem at hand…


Ha ha! and we can have our expectations prepared at the Panopticon Dev Asylum Anti-Summit whether Agile or old school 🙂
