In the Big Idea, I laid out my thesis for The Art Of Troubleshooting: that “all machine problems are human problems.” Unfortunately, there’s a class of “human problems” that you need to be aware of: the deliberate and malevolent kind. We’re talking about things like fraud and sabotage. You need to recognize these as possibilities when you are out in the field solving problems.

The troubleshooting strategies I’ve presented have been discussed in a context you probably weren’t even aware of: that the people you are working with share the goal of having your systems operate smoothly. Sure, there may be personality conflicts, differences of opinion, attempts to be helpful that are ultimately misleading, or even some outright hostility if someone feels like their territory is being violated. However, at a base level, most of the people you interact with will respect your goal of getting a system running again. Even if they don’t offer to lend a hand, usually the worst that will happen is that they will be indifferent to your efforts. When it comes to your co-workers, a common goal is implicit in your relationship. After all, if they didn’t support the purpose of your organization, even at the superficial level of “it’s only for the paycheck,” they’d be working somewhere else!

When someone intentionally violates the tacitly held belief that “we’re all on the same team,” things are going to get weird—and ugly. Stopping a machine from working on purpose adds a wrinkle to the normal “A causes B” model that you’re used to relying on for troubleshooting. That’s because the “A” that causes “B” is typically an unintentional force (wear and tear, unforeseen consequences, acts of nature, etc.). If you’re not considering the possibility of intentional failures, you will be entertaining theories that belong in a Tolkien novel. The hands of a malevolent person can make things happen that look like magic.

(image: Charles O’Rear / The U.S. National Archives)

Failures And Judgements

The origin of every machine is mankind’s purposeful intentions: that’s ultimately the sense in which “all machine problems are human problems.” By now, our collective experience tells us that all machines eventually break down. Deploying a machine for a given purpose carries with it an unspoken assumption of failure somewhere down the road. Routine maintenance and periodic replacement are responses to this reality.

Even though we know that all machines will eventually fail, the how and when are often unexpected. Sure, you may know that your car can break down, but you didn’t know that it would gasp its final breath last Tuesday in the midst of rush-hour traffic. Unfortunately, the inevitability of your car’s demise does not give you specific knowledge of the exact date and time. Isn’t it interesting that we live in a world where it’s certain that every machine will eventually break down, and yet our experience of those failures is one of surprise?

Even a well-maintained system can unexpectedly malfunction, so we don’t judge a machine’s owner when that happens, at least not in a moralistic “you are a bad person” kind of way. If we rely upon a company’s services (and hence, their machines) and they are unable to make good on their promises, we may judge them as being incompetent for not having a contingency plan or sufficient redundancy. However, no one says Netflix is evil when they can’t watch movies on Christmas Eve. Again, the fact that the other party is acting with positive intentions and in good faith makes the difference.

Intentions Don’t Matter

Intentions may matter for moral judgements, but when it comes to repair, a failure is a failure. It doesn’t matter if the cause is a squirrel in the substation, normal wear and tear, a storm, forgotten maintenance, or sabotage, because the goal of fixing something is always the same: fulfill the need that the machine was serving.

When it comes to choosing strategies, the efficacy of a troubleshooting recipe is tied to the design of a system. The intentions (or lack thereof) behind a cause are not a factor. For example, “copying one that works” or quickly narrowing in via half-splitting will be effective, or not effective, based on the machine’s nature. Think of it like this: a cut needs the same medical care regardless of whether the knife pierced the skin accidentally or intentionally. Stitches, bandages, and antiseptic are used in either case: the reality of the wound is the primary consideration, not the story behind the injury. The broad strategies I’ve given you are useful precisely because they are applicable to a wide variety of failure scenarios, independent of the cause’s origin. If you had to know the origin of a cause before you chose a strategy, troubleshooting would be difficult, given that the cause is frequently unknown!

Therefore, when fixing something damaged by sabotage, troubleshooting can proceed like any other case. However, there are some special considerations, especially during the “cleaning up” phase. At some point during a repair, every good troubleshooter asks, “Why did this happen? How can it be prevented from recurring?” These questions have special pitfalls with respect to deliberate failures.

Mistaking Intentional For Natural

Happily, most malfunctions are free of malice. They are unintended and happen in the normal course of people trying to complete their work. Many times, no human is even present during a breakdown; the machine is operating on its own when it dies, and the problem is discovered later. This is the bulk of our experience and we know that experience is a powerful guide. But this well-worn path can blind a troubleshooter to the possibility of sabotage, resulting in failures that look supernatural.

Be especially suspicious when troubleshooting anything related to the security of people or property. We’re talking about things like cash registers, safes, locks, lockers, and entryways (doors and windows). Likewise with their digital equivalents: firewalls, account credentials, virus scanners, etc. I’m always urging you to consider context when troubleshooting; awareness of the environment in which a system is installed is instrumental to finding causes. Up till now, that meant paying attention to things like upstream or downstream machines in a workflow, shared resources like electricity, or environmental variables like temperature and humidity. Now, we’ll add one last critical part of a machine’s context: the people who have access to it. What if someone doesn’t share the goal of having everything run smoothly?

Be sure to consider the entire ecosystem of which your systems are a part: attacks can come from customers, vendors, and the general public. Finally, there’s always the possibility of the “inside job,” where your own co-workers are up to no good.

Mistaking Natural For Intentional

On the flip side, there are industries and occupations that are hyper-aware of the possibility of foul play: banks, casinos, drug dealers, credit card issuers, pawn shops, couriers, accountants, etc. A never-ending history of fraud and theft has plagued these professions, leading to a higher level of suspicion. If something weird happens on the floor of a casino, you can be sure the pit boss is watching out for a scam. When a large amount of money goes missing from an account, most bank managers are probably thinking about embezzlement alongside other, more benign possibilities.

This mindset is justified: the casino that isn’t proactively searching for people trying to cheat it won’t stay open for long. Likewise, the too-trusting pawnbroker will have an unpleasantly short career. For these scam-prone industries, their paranoia is verified by experience, by the innumerable attempts, foiled and successful, to “bring down the house.”

We’ve already seen how the naive troubleshooter, closed to the possibility of foul play, can be blindsided. On the other hand, always suspecting malevolence can be a tiresome burden for you and your employees. Low-trust environments, where everyone is always a suspect, are not conducive to getting the best out of your people.

Leaving Traces

Sometimes, it will be very difficult to tell the difference between an intentional failure and a “normal” one. If the person sabotaging you is smart, they may take great care to prevent detection and make the failure appear like it was naturally caused. As always, a heightened sense of awareness and being present can aid in the detection of foul play. Rich Kral, a veteran HVAC repairman, related this to me when I interviewed him about troubleshooting:

There have been times when I’ve questioned whether or not it [a breakdown] has been sabotage. You have a particular piece of machinery, and you open up the panel and think, “Someone else has been in here,” when you’re the only one who was supposed to have been in there. You can tell, almost by the energy, that someone else has been in the panel, because you put your screws in a certain way. I have a certain sense of feel for tightness and when they are so doggone tight that I can’t get them undone without stripping the screws, I think, “Somebody has done this, somebody else has been here, this is not me.” You know your own trail, you know your own path, you know where you have walked before, and you can sense when someone else is following you. It’s very real.

Rich Kral

Can you focus and tune into these nuances when a failure seems suspicious? What in a machine’s context seems out of place? Rich is clearly in touch with the details of a situation when he can tell by just the tension of the screws whether someone else has been “following his path.”

A Pattern Of Behavior

Goldfinger’s flat, hard stare didn’t flicker. He might not have heard Bond’s angry-gentleman’s outburst. The finely chiselled lips parted. He said, ‘Mr Bond, they have a saying in Chicago: “Once is happenstance. Twice is coincidence. The third time it’s enemy action.”’

Ian Fleming, Goldfinger 1

Sadly, the ambiguity between intentional and unintentional often means that multiple instances of sabotage may be required to definitively tell the difference. Good record-keeping is essential to begin piecing together the puzzle. For further examples, we turn to the real-life thriller The Cuckoo’s Egg by Clifford Stoll. In this compelling book, Stoll recounts his exploits of tracking a hacker that has penetrated the computer systems at Lawrence Berkeley National Laboratory. The tale starts out modestly, with the lab’s accounting system showing a very small discrepancy ($0.75!) in the billing records for the computer system:

The computer’s books didn’t quite balance; last month’s bills of $2,387 showed a 75-cent shortfall.

Now, an error of a few thousand dollars is obvious and isn’t hard to find. But errors in the pennies column arise from deeply buried problems, so finding these bugs is a natural test for a budding software wizard.

Clifford Stoll, The Cuckoo’s Egg 2

Notice that Stoll understandably assumes a benign cause as he begins his investigation: he thinks the cause of this bookkeeping error is a bug in the accounting software. This is a good example of the bias I was talking about before: we usually attribute failures to unintentional causes before considering more sinister explanations. My aim is not to change this basic belief, just to put sabotage on your list of possibilities.

Once you suspect sabotage, you will need to start collecting information (often from surveillance) to prove or disprove the theory. To make this happen, Stoll “liberates” every printer in the organization and unrolls his sleeping bag on the office floor:

All we’d need are fifty teletypes, printers, and portable computers. The first few were easy to get—just ask at the lab’s supplies desk. Dave, Wayne, and the rest of the systems group grudgingly lent their portable terminals. By late Friday afternoon, we’d hooked up a dozen monitors down in the switchyard. The other thirty or forty monitors would show up after the laboratory was deserted. I walked from office to office, liberating personal computers from secretaries’ desks. There’d be hell to pay on Monday, but it’s easier to give an apology than get permission.

Clifford Stoll, The Cuckoo’s Egg 2

The thing I like about The Cuckoo’s Egg is that Stoll’s zeal really comes through in his account. You get a good sense of the engrossing passion that accompanies the pursuit of someone who’s wronged you. I’ve felt these same emotions, having also been harmed by a saboteur at work. During that incident, I too felt like I would go to the ends of the earth to catch the perpetrator. Be careful, though: while this passion can give you the energy to see an investigation through to the end, it needs to be tempered with the goal of justice. While Stoll clearly becomes obsessed with catching his hacker, he also involves law enforcement and coordinates his investigation with them. You should do the same.

When it comes to interpreting patterns of behavior, you might need to accelerate the process. If you want to make sure it’s actually sabotage, then decoys, honeypots, or traps should be on the table (warning: make sure what you’re doing is legal!). However, you’ll need to balance catching your nemesis with getting your regular job done. Stoll grapples with this tension as his preoccupation with the hacker begins to conflict with his other work responsibilities:

Lawrence Berkeley Laboratory was tired of wasting time on the chase. I hid my hacker work, but everyone could see that I wasn’t tending to our system. Scientific software slowly decayed while I built programs to analyze what the hacker was doing.

Clifford Stoll, The Cuckoo’s Egg 2

The Simplest Explanation

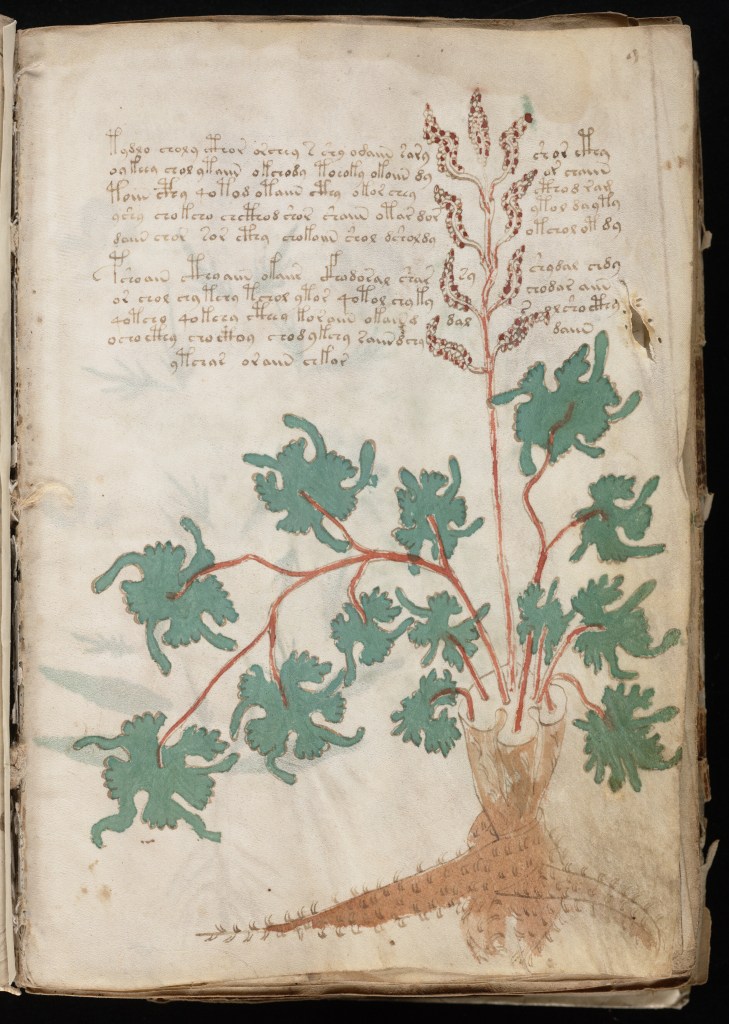

If you’re dealing with sabotage, but mentally exclude it as a possibility, you’re going to find yourself entertaining wild, fantastic theories of how A caused B. For a related example of the flights of fancy people embark on when something obvious is excluded, we turn to the repeated attempts to explain the fascinating Voynich Manuscript. The Voynich Manuscript is a book from the 15th century that contains an unknown language, interlaced with mysterious diagrams and artwork. What does it mean? Who wrote it? It’s captivated many people over the centuries.

Some of the best minds have tried to break its code, even up to the current era with the latest cryptographic methods. Then along came Gordon Rugg, a linguist at Keele University. Rugg’s work says that the manuscript means nothing, that it’s just a clever hoax!3 Not only that, but he also showed how a similar text could be created. Now, what’s more likely: that the text’s cipher is so well constructed that it continues to elude even the most advanced code-breaking techniques? Or, that it’s just a clever ruse? I love a good mystery, but my money would be on the latter.

Once you make the assumption that the Voynich Manuscript means something, you’re led to believe some very unlikely things, among them that 500 years of scrutiny and advancements in cryptography still aren’t good enough to make sense of it! Rugg’s work may be brilliant, but the foundation of his breakthrough was elegantly simple: a willingness to dispense with the notion that it had meaning. Similarly, if you catch yourself entertaining bizarre theories about the cause of a failure while troubleshooting, make sure you double-check your key assumptions. Among the most important to reexamine is that something just “happened on its own.”

(image: Yale University Library)

Keep Good Records

When starting to investigate what you think is a case of sabotage, get out a notebook and start writing. A record of what happened, when it happened, and how you responded will be invaluable as the situation evolves. When assessing why Stoll prevailed, it’s tempting to focus on the fact that he was both smart and determined, but his diligent documentation was equally important. He kept a logbook of everything that happened, constantly updating it as the investigation proceeded. This record allowed him to cross-check information, make correlations, and narrow the search. Oh yeah, and afterwards he got to turn it into a best-selling book. How’s that for an incentive?
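If you’d rather keep your logbook in software than on paper, here’s a minimal sketch in Python of the “what happened, when, and your response” record described above. Everything in it is my own invention for illustration: the names `LogEntry`, `Logbook`, `record`, and `for_system`, as well as the sample entries. It is not a reconstruction of anything Stoll used.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class LogEntry:
    """One observation. Frozen, so an entry can't be quietly edited later."""
    when: datetime   # when the event was observed
    system: str      # which machine, panel, or account was involved
    what: str        # what you saw, stated as an observation
    response: str    # what you did about it


@dataclass
class Logbook:
    """An append-only list of entries (hypothetical helper, not a real library)."""
    entries: List[LogEntry] = field(default_factory=list)

    def record(self, system: str, what: str, response: str,
               when: Optional[datetime] = None) -> LogEntry:
        # Default to the current UTC time if no timestamp is supplied.
        entry = LogEntry(when or datetime.now(timezone.utc), system, what, response)
        self.entries.append(entry)
        return entry

    def for_system(self, system: str) -> List[LogEntry]:
        # Everything recorded about one system, oldest first.
        matches = [e for e in self.entries if e.system == system]
        return sorted(matches, key=lambda e: e.when)


# Made-up sample entries, loosely inspired by the overtightened-screws story.
log = Logbook()
log.record("rooftop unit 3", "panel screws tighter than I leave them",
           "photographed the panel, told the site manager",
           when=datetime(2024, 3, 11, 9, 30, tzinfo=timezone.utc))
log.record("front door lock", "lock sticking",
           "lubricated and retested",
           when=datetime(2024, 3, 5, 14, 0, tzinfo=timezone.utc))
log.record("rooftop unit 3", "breaker found switched off",
           "reset the breaker, noted who had roof access",
           when=datetime(2024, 3, 4, 8, 15, tzinfo=timezone.utc))

history = log.for_system("rooftop unit 3")
for entry in history:
    print(entry.when.isoformat(), "|", entry.what, "|", entry.response)
```

The point of the structure is that every entry forces you to write down an observation and a timestamp, not just a conclusion. Pulling up one system’s history in order is what makes it possible to notice that the “coincidences” keep landing on the same machine.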

Stoll’s record-keeping made a huge difference in the trial that eventually convicted Markus Hess, who was selling the information to the KGB. Between his logbook, phone traces, and printouts of Hess’ activity, the case was easily won:

How did I feel? Nervous, yet confident in my research. My logbook made all the difference. It was like presenting some observations to a room of astronomers. They may disagree with the interpretation, but they can’t argue with what you saw.

Clifford Stoll, The Cuckoo’s Egg 2

Picking Up The Pieces

Cleaning up after an act of sabotage is a messy thing. It’s going to have an entirely different feeling than a typical round of root cause analysis. The main difference is healing your violated trust. Obviously, if the culprit was one of your own, the impact on your staff’s morale will be much worse. Either way, your team’s cohesion will be tested both during the incident and after. People don’t like to be betrayed.

Not Dishonest, But Not Exactly Forthcoming Either

Deception lies along a spectrum: not everything will be so extreme as the active malevolence of a saboteur. There are many gray areas, like when someone just isn’t giving you the whole story. I’ve often encountered a reluctance to be forthcoming when interviewing people about a system failure. In my experience, it usually has to do with a feeling of embarrassment. A person may feel bad because they view themselves as partly to blame for a breakdown. They may also feel stupid that they didn’t know the “proper” way to operate a machine. If the situation borders on negligence, perhaps they want to avoid the consequences of being held accountable.

They may react to these feelings by being overly vague, curt, or by omitting details that implicate themselves. Dealing with this is tricky: if you push too hard for answers and back them into a corner, they may double down and start lying. At the same time, if they were partially responsible, you want to make sure they understand their role in the problem and how they can prevent it from happening in the future.

For milder cases where malice isn’t likely, I think there’s a middle way. I’ve investigated situations where a person was obviously feeling guilty and their behavior communicated embarrassment. Forcing them to say, “I was an idiot!” is a face-losing proposition that isn’t necessary to make your point. Without making them admit guilt, I would launch into an impromptu training session regarding proper use of the system in question. While the subtext is “you were responsible and I’m teaching you the right way,” this allows them to graciously accept your guidance, free of shame.

References:

- Header image: Thew, R. & Fuseli, H. Shakespeare–Hamlet–Prince of Denmark / painted by H. Fuseli R.A.; engraved by R. Thew. 1796. London: published by J. & J. Boydell. [Photograph] Retrieved from the Library of Congress, https://www.loc.gov/item/95521789/.

- 1 Ian Fleming, Goldfinger (London: Penguin Books, 2004), pg. 166.

- 2 Clifford Stoll, The Cuckoo’s Egg: Tracking a Spy Through the Maze of Computer Espionage (New York: Pocket Books, 2005), pgs. 3, 24, 195, 396.

- 3 Joseph D’Agnese, “Scientific Method Man,” Wired, September 2004.

“Intentions don’t matter.” I recently realized this on my own, so it’s cool to read about it. I recently left a job where my manager had very poor judgement. It was his intention to do a good job, but he would never ‘own up’ to his mistakes. It made it very confusing for me when he tried to blame his own mistakes on the machines!


Interesting point regarding your former boss. Tracing problems back to human choice is a big theme of my writing. The origin of machines is us, and so is the glory when they work and the blame when they fail!
